I’m testing Undetectable AI’s Humanizer to make AI-written content pass detection tools, but I’m getting mixed results and worried about penalties or detection. Has anyone used it long-term, and did it actually stay undetected and safe for SEO and publishing? I’d really appreciate honest experiences and any tips before I rely on it for important projects.

Undetectable AI review from someone who spent too long poking at it

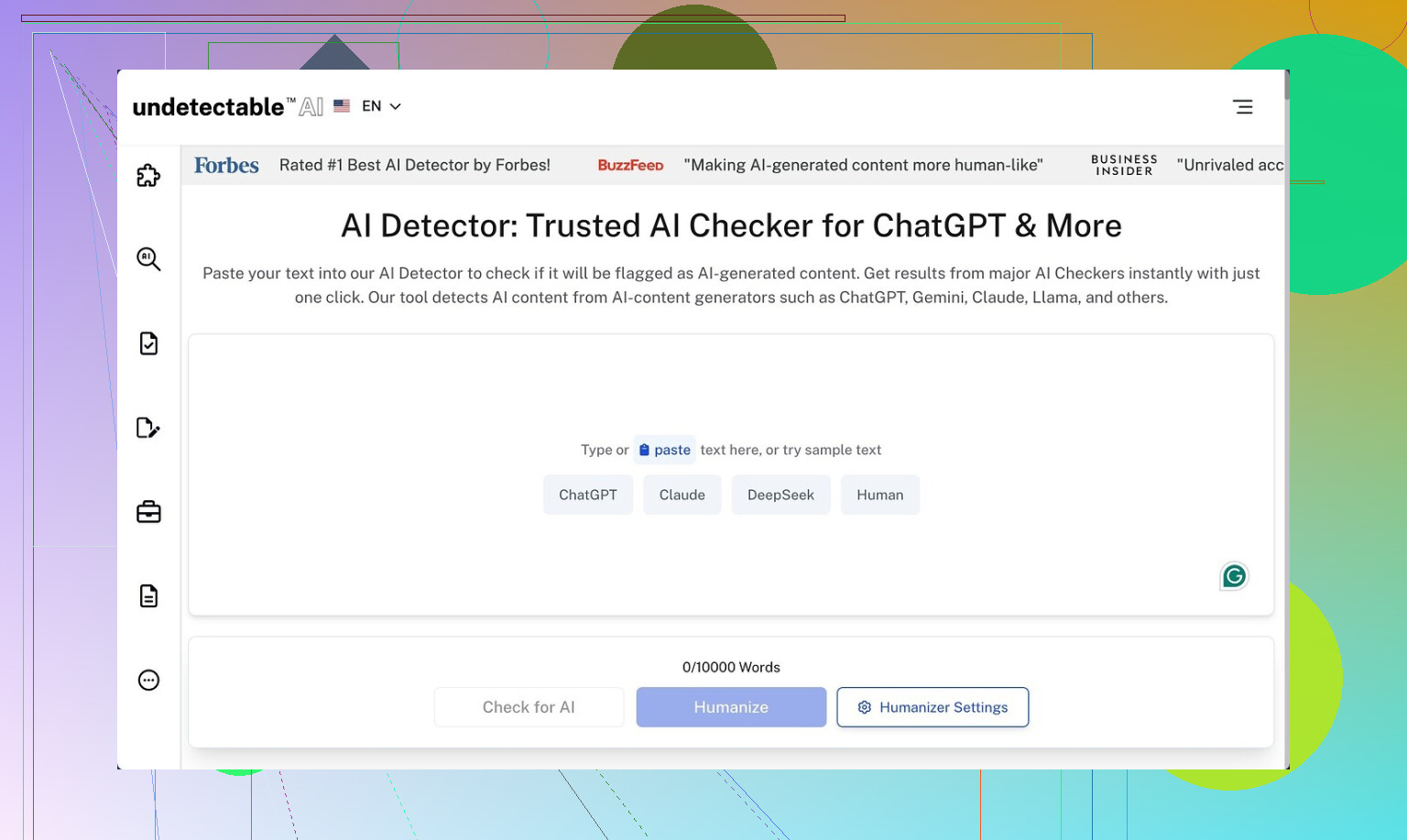

I went in expecting another useless “humanizer”. It did a bit better than that, but it has its own mess.

I only used the free Basic Public model. No paid stuff, no Stealth, no secret settings. Even with that limit, the detection scores were oddly low compared to others I tried.

Here is what I saw.

Detection stuff

I fed in straight GPT text that was getting flagged all over the place.

On the free “More Human” setting:

- ZeroGPT score dropped to around 10 percent AI

- GPTZero hovered around 40 percent AI

Those numbers beat a lot of paid tools I tested earlier that kept landing in the 60 to 80 percent AI range on the same input. So on “fooling detectors”, the public model did not fail completely.

They keep hinting that the paid versions unlock:

- Stealth and Undetectable models

- Five reading levels

- Nine “purpose” modes

- Intensity sliders

If the free tier already gets under the radar for some detectors, I assume the locked stuff pushes it even further. I did not pay to check, so take that as a guess, not a guarantee.

Writing quality, where it falls apart

The text it produced felt like something a person would write when they are trying way too hard to sound like a person.

On “More Human” mode, I would give it 5 out of 10 for quality.

Here is what kept showing up:

- Forced first person everywhere

It kept shoving “I think”, “I believe”, “I feel”, “from my experience” into almost every other sentence. Even when the original text was neutral and objective, the rewritten version turned into a fake blog post.

Example pattern I kept seeing:

- Input: “This method improves accuracy for most models.”

- Output: “From my experience, I feel this method improves accuracy for most models, and I think it helps people a lot.”

If you write academic, technical, or corporate content, this reads wrong fast.

- Repetitive wording and light keyword stuffing

It tended to repeat key terms too often, almost like old SEO writing.

You might get:

- “This AI detection tool helps you avoid AI detection tools so your AI content looks less like AI content to AI detectors.”

The sentences were not broken, but they did not feel sharp. I had to edit nearly every paragraph to clean that up.

- Weird sentence fragments

Every few paragraphs it would drop a line that looked half finished.

Stuff like:

- “A helpful option for people. Especially if they write a lot.”

This kind of thing is fine in chat or personal posts, but starts to look sloppy in anything formal.

“More Readable” mode

I switched to “More Readable” to see if it stopped throwing in all the “I think” and “in my opinion” junk.

It helped a little.

- Fewer forced first person lines

- Slightly smoother flow

- Less obvious repetition

Still not something I would paste straight into a client document or published article without editing. I had to:

- Remove fake personal phrases that did not match the tone

- Merge fragments into full sentences

- Trim repeated words and phrases

So if you plan to use it, think of it more like a rough draft fixer that needs a human pass afterward.

Pricing and limits

This is what I saw on their pricing when I checked:

- Starts around 9.50 dollars per month if you pay yearly

- Word limit on that entry plan sits near 20,000 words a month

So if you do a lot of long form content or multiple rewrites, you hit that cap faster than you expect.
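To put rough numbers on that, here is a minimal sketch of the arithmetic. It assumes every pass through the tool is billed at the full length of the text, which I did not verify, and the article lengths and pass counts are made-up examples. Only the 20,000-word figure comes from the pricing page as quoted above.

```python
# Rough estimate of how many articles fit under the monthly word cap.
# Assumption (not confirmed by the vendor): each rewrite pass counts the
# full article length against the cap.

MONTHLY_WORD_CAP = 20_000  # approximate entry-plan cap quoted above


def articles_per_month(article_words: int, passes_per_article: int,
                       cap: int = MONTHLY_WORD_CAP) -> int:
    """Return how many whole articles fit under the cap."""
    if article_words <= 0 or passes_per_article <= 0:
        raise ValueError("article_words and passes_per_article must be positive")
    words_used_per_article = article_words * passes_per_article
    return cap // words_used_per_article


if __name__ == "__main__":
    # Hypothetical workloads: (words per article, rewrite passes per article)
    for words, passes in [(1_500, 1), (1_500, 3), (3_000, 2)]:
        count = articles_per_month(words, passes)
        print(f"{words} words x {passes} pass(es): {count} articles per month")
```

Under those assumptions, a 1,500-word post rewritten three times leaves room for only four posts a month.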

Privacy, where I started to side-eye it

The part I did not like at all was the data collection in the policy.

They collect more than the usual “email and IP” stuff. It included:

- Income range

- Education level

That is a lot of personal profiling for a tool that edits text. For me, this is enough to use a burner email and avoid linking it to any main account.

Refund policy fine print

They wave around a money back guarantee, but the details are tight.

To get a refund you need to:

- Show that your text scored under 75 percent “human” within 30 days

Two problems with this:

- Detection tools do not agree with each other, so what they count as “75 percent human” is unclear.

- You have to prove their promise failed, which shifts all the work onto you.

So it is not a no-questions-asked refund. More like “prove it with numbers and hope support agrees”.
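A small sketch of why the detector disagreement matters here. It uses the two scores I got on the free tier, and it assumes a "human" score is simply 100 minus the AI score and that the rule is applied per detector. Neither assumption is spelled out in their policy, so treat this as an illustration only.

```python
# Illustration of how one text can land on both sides of a refund threshold.
# Assumptions (not from the vendor's policy): human % = 100 - AI %, and the
# threshold is checked against each detector separately.

REFUND_THRESHOLD_HUMAN = 75.0  # "under 75 percent human" per the refund terms


def human_score(ai_percent: float) -> float:
    """Convert an AI-probability percentage into a 'human' percentage."""
    if not 0 <= ai_percent <= 100:
        raise ValueError("ai_percent must be between 0 and 100")
    return 100.0 - ai_percent


def refund_eligible(ai_scores: dict[str, float],
                    threshold: float = REFUND_THRESHOLD_HUMAN) -> dict[str, bool]:
    """Map each detector name to whether its score would support a refund claim."""
    return {name: human_score(ai) < threshold for name, ai in ai_scores.items()}


if __name__ == "__main__":
    # Scores from the free "More Human" run described earlier in this review.
    scores = {"ZeroGPT": 10.0, "GPTZero": 40.0}
    print(refund_eligible(scores))
```

With those numbers, the ZeroGPT result (90 percent human) would not support a claim, while the GPTZero result (60 percent human) would, so the outcome depends entirely on which detector support accepts.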

Who this might work for

From what I saw, it fits a narrow use case.

Makes some sense if:

- Your top priority is slipping past basic AI detectors on school or corporate systems

- You do not mind editing the output heavily

- You are okay with sharing some personal data and dealing with a fuzzy refund rule

I would not use it when:

- The text must be clean enough to publish without major edits

- You write in a professional or research tone where fake personal voice stands out

- Privacy is a concern

My workflow with it looked like this:

- Generate base text somewhere else

- Run it through Undetectable AI on “More Readable” instead of “More Human”

- Check detection scores with tools like ZeroGPT and GPTZero

- Manually edit:

  - Delete forced first person lines

  - Fix fragments

  - Cut repetition

If you treat it as one step in a longer editing pipeline instead of a magic one click fix, it has some use. If you expect clean, ready to publish text, it will waste your time.

Used Undetectable AI on and off for about 4 months for blog posts and some “safe” test docs, so here is the straight answer.

- On detection and penalties

– It did lower scores on ZeroGPT and GPTZero, similar to what @mikeappsreviewer saw, but not consistently.

– Same text, different week, different detector update, and the “human” score changed a lot.

– Anything important for school or compliance, I would not trust any humanizer long term. Detectors keep changing, and tools lag behind.

– I never got a penalty, but I also never used it on stuff tied to my real name or grades. You are taking a risk if you do.

- Long term use

My pattern over time:

Month 1: “Nice, this kind of works.”

Month 2: “Why is this suddenly getting flagged again in one tool?”

Month 3–4: “I spend more time fixing its quirks than if I rewrote by hand.”

It did not stay “undetectable” in a stable way. It helped some weeks, then felt useless others.

- Quality issues I hit

I agree with the forced first person and repetition comments, but I also ran into a few problems that @mikeappsreviewer did not mention:

– Tone drift. I fed in formal content and got casual, bloggy lines even when I did not pick “More Human”.

– Topic dilution. Short, focused paragraphs turned into longer ones that wandered off the main point. Good for detection maybe, bad for clarity.

– Over-simplification. Technical stuff lost precision, and that is a big red flag in any expert niche.

If your goal is clean, on-brand content, it adds work. I stopped trusting it on anything niche or technical.

- Risk vs reward

If your only goal is to get past basic filters in low-stakes environments, it might help.

If your goal is:

– long term safe publishing

– reputation work

– academic or corporate material

then the risk is not worth it. Especially now that many tools focus more on style and inconsistency than word patterns.

- Alternative approach that worked better for me

What worked more reliably over time:

– Generate a short outline with AI.

– Write the draft yourself from the outline.

– If you use any humanizer, use it lightly on small sections and then edit like a hawk.

This keeps your voice, and detection tools have a harder time flagging it.

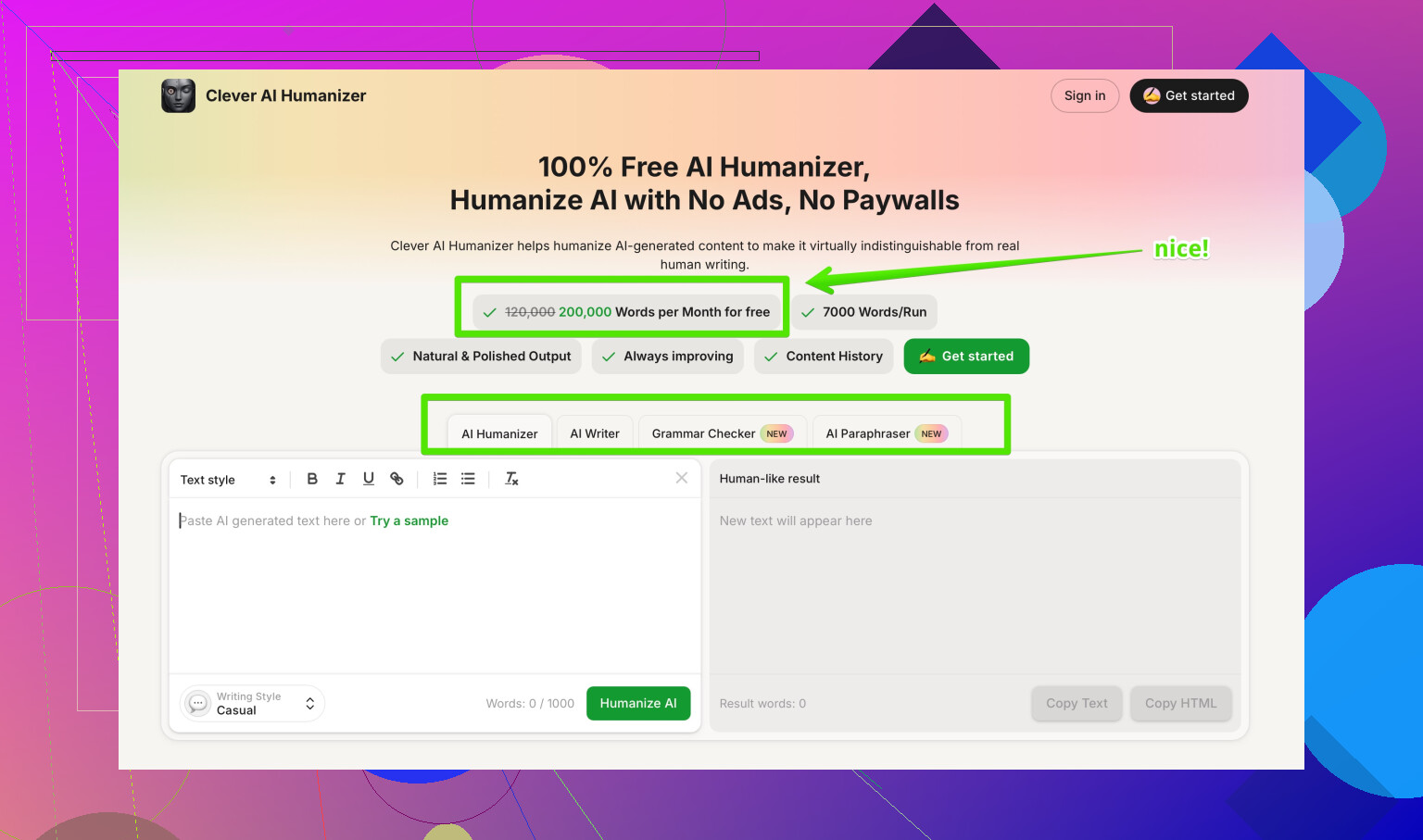

About Clever AI Humanizer

If you want to test a different tool, I got better consistency from Clever AI Humanizer. It focuses more on natural sentence variety and user control over tone instead of pumping in fake “I think” lines.

Still, same warning. Treat any humanizer as a helper, not a shield. You need to edit manually, keep your own style, and avoid pushing pure AI content into high risk places.

Used it for about 3 months on client blogs and some throwaway “test” essays, so here’s the unvarnished version.

Short answer: it can drop detector scores, but it absolutely does not stay reliably “undetectable,” and it introduces its own problems that you eventually get sick of fixing.

I saw results similar to what @mikeappsreviewer and @suenodelbosque described, but a bit less impressive:

- ZeroGPT: usually went from 90–100% AI down to ~20–40% AI

- GPTZero: sometimes improved, sometimes barely moved

- Other detectors: totally random, especially when they updated their models

So if your whole plan is “I’ll run it through Undetectable AI and I’m safe forever,” that’s fantasy. Detectors change, your “humanized” text does not.

Where I disagree slightly with them: I actually found the “More Human” mode borderline unusable for anything professional. The fake personal voice and bloated sentences are not just annoying, they’re a tell. Teachers and editors are catching onto that over-hedged “I personally feel that this might be helpful for many people” style. It screams “AI trying to sound like a person.”

A few specific issues I hit that haven’t been emphasized yet:

- Stylistic fingerprints

After a while you start seeing the same transition phrases and rhythm. If you use it across multiple docs tied to the same identity, it creates a weird, artificial “voice” that doesn’t match how you actually write. That’s risky if someone compares past writing samples.

- Inconsistent paragraph logic

It often preserves sentence-level grammar but scrambles the logic flow:

- Premises out of order

- Conclusions repeated twice

- Definitions moved far away from where they’re used

For casual content it’s fine. For academic / legal / corporate, it’s a mess.

- “Humanized” but obviously machine-processed

Yes, it may pass some basic detectors, but anyone who reads a lot of real human writing will notice:

- Over-explaining trivial points

- Weirdly generic examples

- Overly smoothed-out tone with no sharp edges or real opinion

That combo is exactly what newer, behavior-based detectors look for.

On penalties / long-term safety

I never got directly penalized, but I also never used it on anything graded, regulated, or tied to my real employer. The real risk is not just “AI detector says 100% AI.” It’s:

- Instructor notices your writing style suddenly mutates

- Editor sees multiple pieces from you that all have the same odd cadence

- Company plagiarism / compliance checks flag “pattern anomalies,” not just raw AI probability

So if you’re thinking “I’ll use Undetectable AI for all my assignments,” that’s a long-term pattern that can bite you later even if a single essay slips past.

Where it actually can make sense

If you really insist on using a tool like this:

- Low stakes content: niche blogs, PBNs, disposable accounts, etc.

- Stuff you are willing to heavily edit and basically re-own as your own writing

- Situations where a slight reduction in AI probability is enough to avoid automated filters, not a forensic investigation

I’d personally avoid it for:

- Anything academic with honor codes

- Anything where your real name, license, or job is on the line

- Technical fields where precision is non-negotiable

Alternatives & better workflow

Instead of trying to brute-force “undetectable,” you’ll usually get a safer result with a mixed approach:

- Use AI for outlines, examples, and idea generation.

- Draft in your own voice, even if it’s messy.

- If you still want a rewrite tool, something like Clever AI Humanizer is less obsessed with gimmicky “I think / I feel” padding and more about natural variation and controllable tone. It still needs human editing, but the output doesn’t feel as forced.

- Final pass: tighten logic, fix tone, inject your own anecdotes or domain-specific details that generic models rarely hit.

On your “mixed results” concern

That is the long-term story. It works, then a detector update hits, and suddenly it’s not as “stealth” anymore. You end up chasing a moving target while your content quality degrades. At some point, manually rewriting a paragraph from an AI draft is faster and safer than trying to game the detectors.

Also, if you want to see how different tools and people are approaching this whole “humanizer” thing, check out the Reddit threads that dig into which AI humanizers people rate best.

It’s messy, opinions all over the place, but you’ll notice a recurring theme: no tool is a perfect invisibility cloak.

So: Undetectable AI can sometimes help you slip past basic checks, but it’s not a long-term shield, and it introduces enough quirks that you really have to ask if the risk and cleanup are worth it.