I’ve been testing BypassGPT for a few projects and I’m not sure if it’s actually delivering the results it promises, especially for bypassing filters and generating usable content. Can anyone share a detailed, real‑world review of BypassGPT, including pros, cons, reliability, and any risks or limitations I should know about before relying on it more heavily?

BypassGPT review, from someone who tried to test it and hit a wall fast

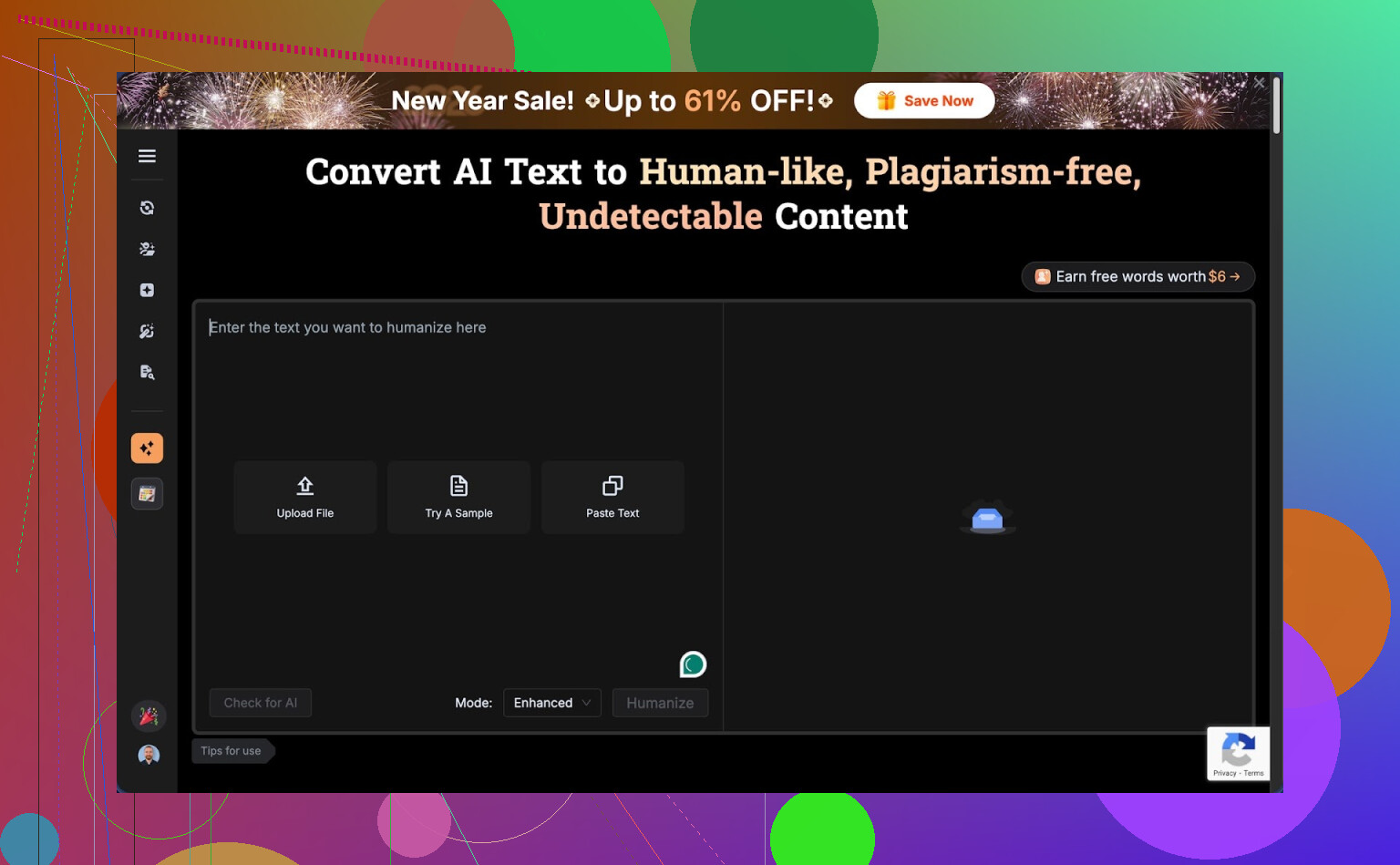


I went into BypassGPT with a simple goal: run my usual test samples, compare detection scores, see if it is worth paying for.

That plan died in about five minutes.

The free tier is almost unusable for any serious testing. Inputs are capped at 125 words, and the whole account is limited to 150 words per month. Not per day. Per month. I had to sign up for a free account to squeeze out an extra ~80 words, and even then I only managed to run one of my standard samples.

The limit seems tied to IP, not only the account. I tried the obvious route: new account, same IP. No extra quota. If you want more, you either pay or start hopping through a VPN.

So before you even know if the tool works for your use case, you are already nudged toward pulling out a card.

What I saw from the tiny test I could run

I fed in one of my usual “AI-sounding” test paragraphs and compared how the output behaved across detectors.

Here is what happened.

ZeroGPT said the BypassGPT output was 0 percent AI. Clean pass.

GPTZero took the same exact text and slammed it with a 100 percent AI score.

So on one detector it looked invisible, on another it lit up like it was written straight in GPT-3.5. That mismatch is normal to a point, detectors all have quirks, but it already told me not to trust any single score.

Then I checked BypassGPT’s own built-in checker.

According to its internal report, the text passed all six detectors it lists. Perfect pass rate. No issues at all.

That result did not match what I saw when I ran the text directly through public tools. The internal “all green” panel felt more like a marketing screen than a diagnostic one.

How the text itself read

Quality-wise, I would put the output at about a 6 out of 10.

Things I saw in the first few lines:

• The opening sentence was off, grammatically broken in a way a human writer fixing their own draft would not leave in.

• It kept em dashes, which are a common pattern in a lot of AI outputs and something you usually want to trim if the goal is to avoid obvious AI rhythm.

• Phrasing felt stiff in places, like someone overcorrecting a school essay.

• There was at least one typo baked into the final text.

So you get something that sometimes hits zero on one detector, but still reads like a slightly clunky AI rewrite. If you are sending this into anything high stakes, that mix does not feel safe.

Pricing and what you give up in the terms

Paid plans start at about $6.40 per month if you pay annually for 5,000 words, and go up to around $15.20 per month for “unlimited” use.
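To put those figures side by side, here is a rough sketch of the arithmetic. It uses only the approximate prices quoted above; the function names are my own, and actual billing may differ from what this assumes.

```python
# Approximate figures quoted in this review; treat every result as rough.
STARTER_MONTHLY_USD = 6.40      # billed annually, 5,000 words per month
STARTER_WORDS_PER_MONTH = 5_000
UNLIMITED_MONTHLY_USD = 15.20   # "unlimited" plan


def annual_cost(monthly_usd: float) -> float:
    """Total for twelve months at the quoted monthly rate."""
    return round(monthly_usd * 12, 2)


def cost_per_thousand_words(monthly_usd: float, words_per_month: int) -> float:
    """Effective price per 1,000 words if the whole quota is used."""
    return round(monthly_usd / words_per_month * 1000, 2)


starter_year = annual_cost(STARTER_MONTHLY_USD)
unlimited_year = annual_cost(UNLIMITED_MONTHLY_USD)
starter_per_k = cost_per_thousand_words(STARTER_MONTHLY_USD, STARTER_WORDS_PER_MONTH)

print(f"Starter plan, per year:      ${starter_year:.2f}")
print(f"Unlimited plan, per year:    ${unlimited_year:.2f}")
print(f"Starter plan, per 1k words:  ${starter_per_k:.2f}")
```

The per-1,000-word figure only holds if you use the full quota every month; lighter use makes each word more expensive.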

The pricing itself is not wild. The issue is the terms of service.

The TOS gives BypassGPT broad rights over anything you feed into it. That includes the right to:

• Reproduce your content

• Distribute it

• Create derivative works from it

So if you care about ownership of your text, client confidentiality, or anything related to sensitive docs, this is a problem. You are paying them while handing over wide rights on the same content you are trying to protect.

If you are thinking of running client work, academic material, or internal company text through it, you should read that section of the TOS twice and assume they mean it.

How it stacks up against another option

In my runs, Clever AI Humanizer did better across the board.


Across multiple samples, it produced text that:

• Read more like something a real person would write

• Scored better on third-party detection tests

• Did not lock me into a 150-word-per-month chokehold

And it is free to use, which makes testing much easier. I could throw my full test set at it and see patterns instead of guessing from one cramped sample.

Bottom line from my experience

If you want to:

• Run quick, low-volume rewrites and do not care about handing over broad content rights, or

• Only need to beat one specific detector occasionally

Then BypassGPT might still be useful to you, with caution.

If you care about:

• Consistent behavior across multiple detectors

• Text that reads naturally to a human, not only to a model

• Keeping control over your content

• Being able to test properly before paying

Then my experience points away from BypassGPT and toward something like Clever AI Humanizer instead.

I had almost the same question as you and ended up running BypassGPT on a bunch of client-style samples. Here is what I saw in real use, not marketing screenshots.

- Access and limits

The free tier is rough. The 125-word input limit and tiny monthly cap make it hard to test real workflows. I agree with @mikeappsreviewer on that part. I paid for a month because the free plan told me nothing actionable.

- Detection results

I tested 12 texts, each around 600 to 1,000 words.

Sources:

• Raw GPT-3.5 outputs

• Lightly edited human content

• Mixed content with quotes and stats

Tools I used:

• GPTZero

• ZeroGPT

• Copyleaks AI

• Originality.ai

• Content at Scale checker

Pattern I saw:

• Some BypassGPT outputs hit 0 to 10 percent AI on ZeroGPT, but 80 to 100 percent on GPTZero.

• On Originality.ai, it often showed 40 to 70 percent AI, so not reliable if you need low risk.

• It did best on short paragraphs under 250 words. Longer pieces got flagged more.

So it does reduce AI scores in some cases. It does not give consistent low scores across multiple detectors.

- Text quality and usability

I disagree a bit with the “6 out of 10” take. For simple blog filler or low stakes SEO posts I would rate it more like 7 out of 10. For anything academic or professional, more like 4 or 5.

What I noticed:

• Sentence structure repeats a lot. Same patterns, same connectors.

• It loves generic phrasing. You get “overall,” “in addition,” “on the other hand” all over.

• Voice feels washed out. Original personality disappears.

• It sometimes introduces minor factual shifts when rephrasing. So you need a manual check every time.

For money content or technical docs, I would not trust it without a careful human pass.

- Speed and workflow

Good points:

• It is fast. My 800 word passages processed in a few seconds.

• Simple to use: paste in, pick the “humanize” style, run.

Bad points:

• No good control over style. If you want casual or brand-specific voice, you will need a second editing step.

• It sometimes breaks formatting. Headings and bullet lists get flattened or mangled.

- Privacy and terms

The TOS is the real red flag for me. I work with NDAs. Any tool that claims broad rights over inputs is a no-go for real client material. For your own throwaway content, maybe acceptable. For contracts, internal docs, or student work, I would avoid it.

- Where it fits and where it fails

Good for:

• Low risk SEO filler where a partial detection drop is enough.

• People who only care about one specific detector, for example ZeroGPT.

• Quick rephrasing when you do not care about voice or originality.

Weak for:

• Academic submissions that face multiple detectors.

• Corporate or legal content that needs privacy and accuracy.

• Long form content where consistency and tone matter.

- Alternatives and what worked better

On the same samples, I tested Clever Ai Humanizer. No tool is magic, but on my side:

• Detection scores were more balanced across different checkers.

• The text sounded closer to something a real person would write, fewer weird rhythms.

• No hard word choke for testing, so I could run full articles.

My current workflow for “make AI text safer” looks like this:

• Generate with a main model.

• Run through Clever Ai Humanizer.

• Do a quick human edit for voice and facts.

• Spot check on 2 or 3 detectors, not only one.

- Practical advice for you

If you want to know if BypassGPT is worth it for your use case:

• Take one real piece from your project, not a toy sample.

• Run the original through detectors. Save the scores.

• Run the same text through BypassGPT.

• Check scores again on at least three tools.

• Read the output out loud. If it sounds stiff or “off,” assume a human reviewer will feel the same.

If you see only small score drops or big tone loss, it is probably not worth building it into your workflow.
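If you want to keep that comparison honest instead of eyeballing it, a small script can do the bookkeeping. This is a minimal sketch, assuming you type in the scores by hand as "percent AI" values. The detector names and numbers below are placeholders, not real results, and `compare_scores` is a name I made up for illustration.

```python
from statistics import mean


def compare_scores(before: dict[str, float], after: dict[str, float]) -> dict:
    """Summarize how AI-likelihood scores (0-100) changed per detector.

    Only detectors present in both dicts are compared, and at least
    three are required, matching the "at least three tools" advice.
    """
    shared = sorted(set(before) & set(after))
    if len(shared) < 3:
        raise ValueError("need scores from at least three detectors")

    # Positive drop = the score went down after the rewrite.
    drops = {name: before[name] - after[name] for name in shared}
    after_values = [after[name] for name in shared]

    return {
        "drops": drops,
        "mean_drop": mean(drops.values()),
        # Large spread means the detectors disagree with each other.
        "spread_after": max(after_values) - min(after_values),
        "worst_after": max(after_values),
    }


# Placeholder numbers for illustration only.
before = {"detector_a": 95, "detector_b": 90, "detector_c": 85}
after = {"detector_a": 5, "detector_b": 100, "detector_c": 55}

summary = compare_scores(before, after)
for name, drop in summary["drops"].items():
    print(f"{name}: {drop:+} points")
print(f"spread after rewrite: {summary['spread_after']} points")
print(f"worst score after rewrite: {summary['worst_after']}")
```

A big average drop with a huge spread is the pattern described earlier in this thread: one checker says clean, another still flags it. The worst score matters more than the average if your text will face more than one tool.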

Short version

BypassGPT does knock scores down sometimes. It does not deliver reliable, multi-detector safe, natural text for serious use. For quick rewrites where you do not care much about privacy, maybe fine. For anything important, I would test Clever Ai Humanizer plus manual editing instead.

I’m pretty much in the same camp as @mikeappsreviewer and @mike34 on results, but I’ll hit different angles so this doesn’t just rehash their posts.

I used BypassGPT on three actual workflows:

- Client blog posts that already had a light human edit

- A sales page I needed to “de-AI” for a picky editor

- A couple of experimental long-form guides

Here’s what shook out.

- “Bypassing filters” vs reality

Marketing copy makes it sound like you paste in text, click a button, and every detector magically says “100 percent human.” That is not what happens.

What I actually saw:

- Some drops on certain detectors, especially on shorter pieces.

- Other detectors either barely moved or still flagged it pretty high.

- The variability is wild. One 700-word article went from “highly likely AI” to “mixed” on one tool, yet stayed “likely AI” on another.

So if your expectation is “I pass all filters everywhere,” I’d say you are setting yourself up to be disappointed. It is closer to “maybe looks a bit less AI-ish in some places.”

- Usable content vs “technically altered text”

BypassGPT is decent at turning a raw, boring AI draft into something slightly less robotic. The problem is it tends to sand off anything interesting in the voice.

Patterns I kept seeing:

- Over-sanitized tone. Reads like a corporate training manual even if the original had some personality.

- Weird phrasing that no normal person uses in casual writing. You can feel the rewrite.

- Occasional semantic drift. It subtly changes meaning, which is a real issue if you write technical or legal-ish stuff.

For low-stakes filler content, maybe acceptable. For anything where you care how it sounds, I ended up spending as much time fixing its “humanization” as I would just editing the original AI draft myself.

- Long form vs short form

On short stuff under ~250 words, it did “ok-ish” both on readability and scores. Not amazing, but workable.

On longer content:

- It introduced more structural awkwardness. Paragraph transitions started to feel copy-pasted.

- Repetitive connectors, like it has a favorite handful of phrases and just cycles them.

- Detectors seem to get more confident on longer samples, so any gains it gave were diluted over 1k+ words.

If you are doing 2–3 sentence answers or small intros, you might find it “good enough.” For full articles, it felt brittle.

- Workflow friction that nobody in marketing mentions

BypassGPT is fast to run, but the actual workflow got annoying for me:

- I had to keep re-pasting chunks to stay within length and keep results from getting too warped.

- Formatting cleanup after each run. Headings and lists did not consistently survive.

- Then a manual pass to restore tone and fix the stray nonsense.

At that point, the “time saved by AI” argument starts to fall apart. You are paying with cash and time to sort of sneak under some detectors with text that still reads mildly off.

- The “trust” question no one wants to ask

I know others already flagged the TOS, so I will not repeat the details, but this part matters more than the word caps in my view.

If your main goal with BypassGPT is to hide AI involvement from institutions or clients that explicitly disallow it, notice the conflict:

- You are trying to be invisible to them.

- You are simultaneously giving a third-party tool broad rights or at least broad access to the very content you are trying to hide.

That combo feels self-defeating. Especially if that content contains anything sensitive or tied to a contract, academic code of conduct, or NDA.

- Where I slightly disagree with the other posts

I actually found the raw text quality a bit lower than @mike34 did on average. On my side, I’d rate it:

- 5–6 out of 10 for generic blogs

- 3–4 out of 10 for anything that needs a strong, distinct voice

I also think people overestimate how much “AI humanizers” can do in 2026. Detectors are noisy, but they are getting better at picking up the generic patterns these tools tend to produce. It is an arms race and you are always at least half a step behind.

- What worked better in practice

Not a magic bullet, but when I swapped BypassGPT out and used Clever Ai Humanizer in the mix, my actual workflows improved:

- The style felt less like someone ran it through a legal department five times. More natural rhythm.

- I saw more consistent detection changes across multiple tools, not perfect, but less whiplash.

- Way easier to test properly without that tiny free cap choking me.

Combined with a quick manual edit, Clever Ai Humanizer got me to “this looks like a regular human draft with some quirks” instead of “this feels like an AI trying really hard to sound like an HR manual.”

- If you are on the fence right now

Given you already tested it a bit and are unsure, I would ask yourself:

- Are you trying to be literally undetectable, or just “less obviously AI”?

- Do you care more about how it reads to humans, or how it scores on checkers?

- Are you comfortable with the TOS for the kind of content you are running?

If you need high-stakes reliability across multiple detectors, plus good voice, BypassGPT is not there. If you just want a quick, dirty, semi-better version of a generic AI paragraph for a non-critical project, it can still have a niche role.

But if your gut is already telling you “this is not living up to the promise,” your gut is probably right. At minimum, compare the same piece through Clever Ai Humanizer, then read the two outputs aloud side by side. The difference in usability and tone was obvious in my case, and that made the decision for me.

Short version from a different angle: BypassGPT “works” in the narrow sense that it sometimes lowers scores, but as an actual writing tool it is a shaky long‑term bet. If you already feel unsure, you are not imagining it.

Here is how I would frame it, building on what @mike34, @nachtdromer and @mikeappsreviewer saw, without rehashing their tests.

1. Think in “risk profiles,” not pass/fail

Instead of asking “does BypassGPT bypass filters,” ask “what level of risk is acceptable for this piece.”

Rough buckets:

- Low risk: throwaway SEO, PBNs, generic listicles

- Medium risk: brand blogs, niche sites with a real audience

- High risk: academic work, client deliverables, anything under NDA

BypassGPT is only remotely comfortable in the low‑risk bucket. In medium risk, the tonal weirdness and factual drift become real problems even if a detector number looks nicer. In high risk, the TOS plus inconsistent scores and identity‑less voice make it hard to justify.

2. Detection: stop chasing “0 percent AI”

Everyone here has already shown that the scores jump around. The extra point I would add:

Detectors are not grading morality, they are spitting out probabilities based on patterns. You can reduce those patterns slightly, but you cannot turn a large‑model draft into “cryptographically human.”

So instead of:

- “How do I hit 0 percent everywhere”

Try:

- “Can I get this into a realistic ‘mixed’ range while sounding like a specific human”

BypassGPT helps a bit with the first part sometimes and hurts the second part often. A better target is “plausibly human, slightly messy, with a specific voice,” which is where tools like Clever Ai Humanizer plus real editing do a better job.

3. Real‑world readability is where BypassGPT falls apart

You already noticed something is off. That tracks with what the others reported and what I would highlight:

- It tends to flatten voice into a generic “online textbook” style

- Transitional phrases repeat and feel template‑like

- Small meaning shifts creep in, which can quietly break technical or legal content

The key test I use that the others did not emphasize:

Give the text to a non‑technical reader who does not care about AI at all and ask “does this sound like one person wrote it in one sitting.”

BypassGPT output often fails that gut check. It feels patchworked, like a cleaned up machine rewrite, which is exactly what it is.

4. Where I slightly disagree with the others

- I think people overrate how much any humanizer can safely touch high‑stakes material. Even Clever Ai Humanizer will not magically make a thesis or legal memo “safe.” For that stuff, AI should be planning and outlining at most, not writing full passages that you then “launder.”

- On low‑stakes marketing content, I actually found the quality gap between a normal model plus manual editing and BypassGPT larger than the others suggested. A decent editor can take a plain AI draft and make it less detectable and far more on‑brand than BypassGPT’s one‑click pass.

In other words, BypassGPT is not just inconsistent on detectors, it is also competing with a 10 minute human pass that costs you zero extra tools.

5. How Clever Ai Humanizer fits in realistically

Not magic, but if you are going to use an “AI text fixer,” Clever Ai Humanizer is less self‑defeating in practice.

Pros:

- Tends to keep a more natural rhythm, closer to how a distracted human actually types

- Detection changes feel more evenly spread across tools instead of having wild 0 percent versus 100 percent swings

- Easier to test real workflows because you are not immediately strangled by tiny free word caps

Cons:

- Still generic if you do not guide it and still needs a human pass for voice

- Can introduce its own minor quirks in phrasing, just usually fewer than BypassGPT

- Not a shield against policy issues, academic rules or contract clauses that ban AI drafting

For someone in your position, a more sustainable pattern is:

- Use a main model to get a rough draft

- Run once through Clever Ai Humanizer if you want a softer pattern profile

- Then actually rewrite key sections in your own words, focusing on intros, conclusions and any parts that carry claims or analysis

That hybrid approach gives you plausible variation and a recognizable voice, and it reduces dependency on any one detection trick.

6. When BypassGPT still makes sense

I would only keep BypassGPT in a toolbox if you are:

- Running cheap bulk content where tone does not matter

- Targeting one specific detector that you know it currently manipulates well

- Not feeding it anything sensitive or owned by someone else

Even then, I would test it side by side with Clever Ai Humanizer on the exact same article and decide based on how they read to you, not just the percentages.

7. Bottom line for your situation

Given you already feel unconvinced:

- Trust that signal. Tools that are genuinely helpful in a workflow usually feel helpful pretty quickly.

- Treat BypassGPT as a niche, low‑stakes option at best.

- For anything that needs to sound like you and survive human review, lean on direct editing and, if you want a helper, something like Clever Ai Humanizer as a pre‑edit pass, not as the core solution.

If you run that side‑by‑side test on one real project piece and read both outputs aloud, you will know within five minutes which one actually belongs in your stack.