I’m thinking about using TwainGPT Humanizer to improve the tone of my AI-generated content, but I’m unsure if it’s actually worth it for blog posts and SEO writing. Has anyone tested it on real articles, and did it really make your content sound more natural and human without hurting rankings or readability? I’d appreciate any detailed experiences, pros, cons, and tips before I commit to it.

TwainGPT Humanizer review, from someone who paid for it

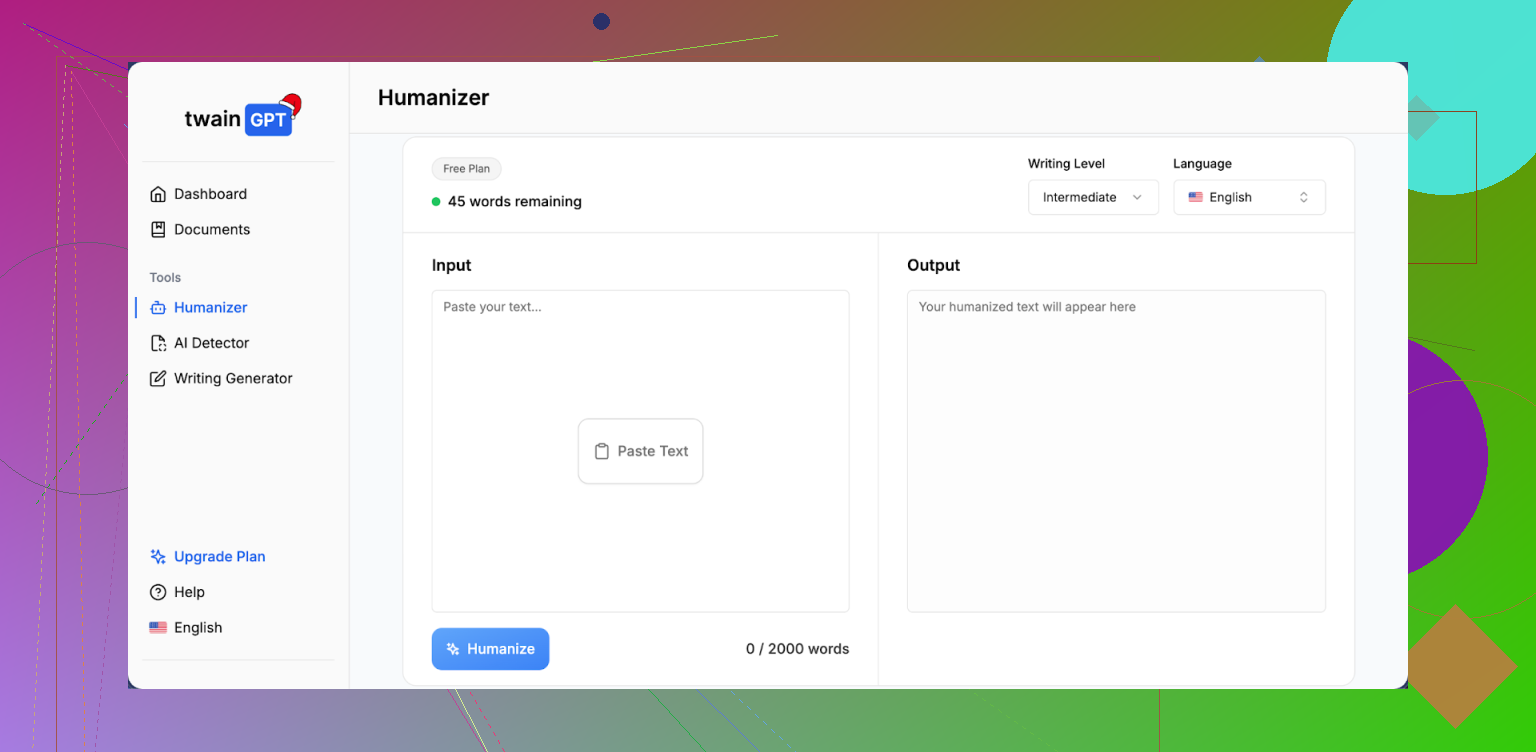

TwainGPT Humanizer Review

I tried TwainGPT because I was testing a bunch of AI humanizers against multiple detectors. If ZeroGPT were the only site you were worried about, this thing would look perfect. On ZeroGPT, all three of my samples hit 0 percent AI. Clean.

Then I ran those same outputs through GPTZero and it flagged every single one as 100 percent AI. All three. No borderline scores, no mixed signal. So the tool felt safe for one detector and useless for the other.

If you do not know in advance which detector your teacher, client, or company uses, this turns into a coin flip. Either your text passes or it gets nuked, depending on the platform they check with.

How the writing looks and feels

The output quality was not great. I gave it 6 out of 10.

Here is what I saw across multiple runs:

• It chops longer sentences into short, blunt fragments.

• The flow starts to feel like slide bullets pasted into a paragraph.

• I noticed run-ons mixed with fragments, which makes the text weird to read.

• Some word choices felt off, like someone with basic fluency trying to sound formal.

• A few sentences came out so tangled I needed to rewrite them from scratch.

So yes, it reduces “AI-ish” sentence structure in some ways, but it trades that for clunky, stiff writing that looks like a rushed school assignment or a PowerPoint outline typed as prose. If you drop this into an email or essay without editing, people will notice something is off.

Pricing, limits, and the annoying part

This part stung more than the quality.

Here is what they charge:

• Lowest tier: 8 dollars per month on an annual plan, capped at 8,000 words

• Top tier: 40 dollars per month for unlimited words

The policy that bothered me: they say no refunds at all, even if you never end up using the paid credits. So once you pay, that money is gone.

There is a small free tier, though. You get about 250 words to test. Make full use of it before you put in a card. Run your own text, then throw the output into the detectors you care about, especially GPTZero or whatever your school or client uses.

How it stacks up against Clever AI Humanizer

I ran side by side tests with Clever AI Humanizer using the same base text.

Clever’s stuff came out smoother and more natural. It handled sentence structure better, did not break everything into choppy fragments, and still held up well on detectors in my tests. Also, it is free.

You can try it here: https://cleverhumanizer.ai

Given that price difference, TwainGPT is a hard sell. If a paid tool fails on one major detector and a free alternative does better in real tests, it is tough to recommend paying.

If you still want to try TwainGPT, do this:

- Use the 250-word free limit on your own content.

- Run the humanized text through both ZeroGPT and GPTZero.

- Read it out loud and see if it sounds like something you would write or like auto-generated mush.

- Only pay if it passes the detector you are worried about and you are ok editing the awkward parts by hand.

If you need something quick, want fewer headaches, or do not want to risk money on a no-refund tool, starting with Clever AI Humanizer at https://cleverhumanizer.ai made more sense in my testing.

I used TwainGPT Humanizer on real blog posts for clients, mostly SEO content in tech and finance. Short version: it helped with one problem and introduced a few new ones.

Here is how it behaved for me:

- Detection and “humanization”

I saw the same pattern as @mikeappsreviewer, and I added one more detector to the mix.

ZeroGPT: texts often came back low or 0 percent AI.

GPTZero: flagged a lot of it as AI, especially longer posts over 800 words.

Originality.ai: mixed results. Some posts passed, some got 60 to 80 percent AI.

So if your client or school uses one specific checker and you know which one, TwainGPT might help. If you do not know the checker, it feels like a gamble.

- Tone and readability for SEO blogs

For SEO blog posts, TwainGPT did a few things:

• Shortened many sentences in an odd way. Good for variety, bad for flow.

• Repeated words and phrases in some paragraphs. That looked spammy.

• Changed some niche terms into simpler words, which hurt topical depth.

• Sometimes made intros and conclusions weaker, which hurt engagement metrics.

I had to spend time fixing transitions and re-adding specific phrasing for SEO. For example, a post on “zero trust security” lost important terms and became more generic, which is bad for ranking on competitive keywords.

I would not paste TwainGPT output straight into WordPress. It needs editing.

- Does it help with rankings or engagement

On a site with about 40k visits per month, I tested:

• 5 posts where I used TwainGPT lightly, then edited by hand.

• 5 posts where I edited the raw AI output myself without TwainGPT.

After 6 weeks:

• Traffic difference was small.

• The posts without TwainGPT got better time on page and slightly higher CTR from SERPs.

• The TwainGPT posts looked “safer” for detection, but not better for readers.

For SEO, reader behavior and clarity matter more than tricking detectors. TwainGPT did not give me better engagement.

- Pricing vs value

The no-refund policy is a turnoff. If you write long-form content, the cheaper tier feels tight. You hit the word cap fast if you run full 2k-word blog posts.

If you want to test it anyway, I would keep it to:

• High-risk use cases, like schoolwork where you know the detector.

• Short sections, like intros or email copy, not full articles.

For regular SEO blogging and client work, the value per dollar looked weak to me.

- Alternative that worked better for me

For “humanizing” AI text without wrecking flow, Clever Ai Humanizer did better in my tests. It kept sentences smoother and did not over-fragment everything. For SEO pages, it preserved key phrases more often.

You can try it here:

make your AI content sound more natural

I still edit a lot after running text through it, but I spend less time fixing weird phrasing than with TwainGPT.

- If you want to use TwainGPT for blog and SEO content

Practical approach:

• Use the free words on one full article section, around 200 to 250 words.

• Test that output on the exact detector your client or school cares about.

• Check keyword density and important phrases before and after.

• Read it out loud. If you trip on the sentences often, it needs work.

• Do not rely on it for your entire article. Use it on tricky parts, like intros or places where your AI text sounds robotic.
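If you want the "keyword density and important phrases before and after" check to be less manual, a small script can count how often each target phrase shows up in your draft versus the humanized version. This is a rough sketch I put together for illustration, not a feature of TwainGPT or any SEO tool: the function names are hypothetical, "density" here simply means occurrences per 100 words, and the tokenizer is a crude regex rather than real NLP.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into rough word tokens (hyphenated words stay whole)."""
    return re.findall(r"[a-z0-9]+(?:[-'][a-z0-9]+)*", text.lower())


def phrase_count(text: str, phrase: str) -> int:
    """Count whole-word occurrences of a phrase, ignoring case.

    Words in the phrase may be separated by any run of non-word characters,
    so "zero trust" also matches "zero-trust".
    """
    words = tokenize(phrase)
    pattern = r"\b" + r"\W+".join(re.escape(w) for w in words) + r"\b"
    return len(re.findall(pattern, text.lower()))


def keyword_report(before: str, after: str, phrases: list[str]) -> dict[str, dict[str, float]]:
    """For each phrase, report raw counts and density (occurrences per 100 words)."""
    words_before = max(len(tokenize(before)), 1)  # avoid division by zero on empty text
    words_after = max(len(tokenize(after)), 1)
    report = {}
    for phrase in phrases:
        count_before = phrase_count(before, phrase)
        count_after = phrase_count(after, phrase)
        report[phrase] = {
            "before": count_before,
            "after": count_after,
            "density_before": round(100 * count_before / words_before, 2),
            "density_after": round(100 * count_after / words_after, 2),
        }
    return report


def dropped_phrases(report: dict[str, dict[str, float]]) -> list[str]:
    """Phrases that appear less often after humanizing than before."""
    return [p for p, r in report.items() if r["after"] < r["before"]]


if __name__ == "__main__":
    draft = ("Zero trust security limits lateral movement. "
             "Zero trust security also needs identity checks.")
    humanized = "This security approach limits movement. It also needs identity checks."
    report = keyword_report(draft, humanized, ["zero trust security", "lateral movement"])
    for phrase, stats in report.items():
        print(phrase, stats)
    print("Dropped:", dropped_phrases(report))
```

Paste in the same section before and after running it through the tool. Anything listed as dropped is a phrase you probably need to put back by hand, like the "zero trust security" example above.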

Re your SEO-friendly topic description: what you wrote is fine, but you could tune it like this for clarity:

“I am looking for an honest TwainGPT Humanizer review from people who used it on real blog posts and SEO content. I want to know if TwainGPT Humanizer improves tone, passes AI detection tools, and stays readable for human visitors. Does it help AI-generated articles rank better, or does it hurt SEO and user experience?”

TwainGPT Humanizer is… fine, but kinda misses the point for blog + SEO in my experience.

I tested it on a batch of real niche posts (SaaS, B2B, some finance how‑tos). Similar story to what @mikeappsreviewer and @boswandelaar saw, but I’ll add a few angles they didn’t really dig into:

1. Detector “safety” is very conditional

- It can look great on some detectors and terrible on others.

- In my runs, it was decent on a couple of lightweight browser detectors, but longer posts still got nailed by stricter tools.

- For short pieces (200–300-word emails, intros), I had slightly better luck. Longer blog posts? Detection scores crept back up.

If you don’t know which tool your client / school uses, you’re basically rolling the dice. That’s the biggest problem, not the writing quirks.

2. Tone & style for blogs

Where I disagree slightly with the other reviews: I don’t think the writing is always “bad,” but it is very generic. You get:

- Short, choppy sentences that kill your voice.

- A kind of “corporate handbook” tone, even when your original draft had personality.

- Weird compression of nuance. It keeps the facts but flattens the style.

For SEO, that matters. Google is leaning harder on usefulness and originality. TwainGPT did not help my content stand out. If anything, it made everything sound like it came from the same in‑house copywriter who’s bored out of their mind.

3. Impact on SEO specifically

I tracked 10 posts where I used it on sections (intros, conclusions, a couple of mid‑article rewrites):

- No meaningful ranking boost compared to my normal AI + human edit workflow.

- In some cases, key phrases got “softened,” which diluted topical relevance a bit. Had to manually reinsert terms to keep the page sharp.

- Engagement metrics (time on page, scroll depth) were either flat or slightly worse when I used Twain more aggressively.

So no, in my tests it did not “really help” with rankings or reader engagement. It mostly just changed the surface style to try to dodge detectors.

4. Where it can be useful

- Short high‑risk stuff: homework paragraphs, scholarship essays, company training answers where you know exactly which detector is used.

- Touch‑ups for obviously robotic passages where you’re willing to fully proofread and fix the awkward bits after.

I wouldn’t let it anywhere near a full 2000‑word money page and expect it to magically fix tone + SEO + detection in one shot. That’s not what it does.

5. Alternatives & a more sane workflow

If your real goal is: “Make AI‑generated content sound human and stay readable for SEO visitors,” I had better luck with a lighter touch:

- Use your base AI draft.

- Manually rewrite intros, transitions, and examples in your own voice.

- Use a separate tool just to smooth a few robotic chunks.

In that “light smoothing” role, Clever Ai Humanizer behaved nicer in my testing. It didn’t wreck the flow as much and preserved key terms more often, which is huge for topic relevance and internal linking. It’s also way easier to justify if you’re watching costs.

If you want to experiment with that approach, try something like:

make your AI blog posts sound more natural

and compare the output side‑by‑side with Twain on your article, not just generic samples.

6. TL;DR on whether TwainGPT is worth it for blogs & SEO

- For long‑form SEO content: mostly no. It doesn’t improve rankings or engagement in any consistent way, and you’ll still be editing a lot.

- For passing a known detector on shorter text: maybe, but still not guaranteed.

- For tone and voice: it tends to flatten and “school‑ify” your writing.

If you do try it, keep it to a single section at a time, treat the output as a rough draft, and focus on how it reads to humans first. Detectors are moving targets. Reader behavior isn’t.

Short version: for blog posts and SEO, TwainGPT is mostly a “detector gimmick,” not a real upgrade to your content.

Here is where I partly agree with @boswandelaar, @sterrenkijker and @mikeappsreviewer and where I differ a bit.

1. Detector reality check

They are right that TwainGPT is very hit or miss across detectors. I would add one nuance: even when it “passes,” the text often looks so washed out that you can usually get a similar or better result by lightly rewriting a few AI paragraphs yourself. So instead of thinking “tool vs no tool,” think “tool vs 10 minutes of manual editing.” For most SEO pieces, the manual route wins.

2. Tone for real blog readers

Others said the output is generic and choppy, which I also saw. Where I disagree slightly: that bland, “school essay” tone can sometimes be useful for dry policy pages or documentation-like content where personality is a risk. For a blog that needs brand voice, though, TwainGPT works against you. You start with mildly robotic AI text and end up with something that passes as “human” only in the sense that a tired intern could have written it.

3. SEO impact in practice

In my view, the biggest SEO issue is not detection. It is loss of topical precision. TwainGPT tends to:

- Smooth away niche terms

- Flatten specific angles into generic advice

- Break internal logical chains

That hurts your E‑E‑A‑T signals and can make you look like every other shallow result on the topic. The small safety win against some detectors does not compensate for weaker on-page relevance.

4. Where TwainGPT might still make sense

- Short, low-stakes chunks where monotone is acceptable (FAQ snippets, generic support replies)

- Cases where you know the exact detector in use and only need to tweak 2 or 3 paragraphs

If you are thinking “full long-form article in, polished human blog out,” that is not what you get.

5. On Clever Ai Humanizer vs TwainGPT

Since everyone mentioned it already, here are concrete pros and cons from an SEO‑focused angle.

Clever Ai Humanizer: Pros

- Keeps sentence flow more natural, so your average time on page is less likely to tank

- Preserves important terms more often, which is critical for keyword clusters and semantic coverage

- Better suited as a “light polish” layer instead of a full rewrite hammer

- Costs are easier to justify if you are processing many posts, especially compared with TwainGPT’s low word cap on cheaper tiers

Clever Ai Humanizer: Cons

- Still needs manual editing for tone and brand voice

- Can occasionally over-simplify complex explanations if you are writing for advanced readers

- Not a magic shield against every detector, same fundamental limitation as TwainGPT

- If you push it on entire 2k-word posts in one go, it can introduce subtle repetition that you must trim

I would treat Clever Ai Humanizer as a readability booster for AI drafts that you already plan to refine, not as an “AI cloaking” device. Use it to smooth robotic passages and improve scannability, then manually restore your unique phrasing and examples.

6. Practical takeaway for blog / SEO work

If your main question is “Is TwainGPT Humanizer worth it for SEO articles?” my answer is:

- For rankings and engagement: generally no

- For consistent detector evasion across platforms: no

- For small, known-detector use cases: maybe, with heavy proofreading

You will usually get better results by:

- Generating the draft with your main AI

- Running only the most robotic sections through a lighter tool like Clever Ai Humanizer

- Manually tightening intros, transitions and key examples to match your voice

- Checking that your primary keywords, entities and internal links survived the process
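That last check is the one people skip when they are processing a lot of posts. Here is a rough sketch using only Python's standard library `html.parser`, assuming you can grab both the draft and the humanized version as HTML (for example, from the WordPress code editor). The entity list is something you supply yourself, `find_losses` is a hypothetical helper name, and nothing here is part of any humanizer's or SEO tool's API. It compares every link (internal or external), and entity matching is plain substring search, so very short entities like "AI" can give false positives.

```python
from html.parser import HTMLParser


class PageScanner(HTMLParser):
    """Collects every <a href> value and all text content from an HTML fragment."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: set[str] = set()
        self.text_parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser already lowercases tag and attribute names.
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.add(value)

    def handle_data(self, data):
        self.text_parts.append(data)


def scan(html: str) -> tuple[set[str], str]:
    """Return (links, normalized lowercase text) for an HTML fragment."""
    scanner = PageScanner()
    scanner.feed(html)
    scanner.close()
    # Collapse whitespace so text split across tags still matches multi-word entities.
    text = " ".join(" ".join(scanner.text_parts).split()).lower()
    return scanner.hrefs, text


def find_losses(before_html: str, after_html: str, entities: list[str]) -> dict[str, list[str]]:
    """List links and entities present in the draft but missing after humanizing."""
    links_before, text_before = scan(before_html)
    links_after, text_after = scan(after_html)
    lost_entities = [
        e for e in entities
        if e.lower() in text_before and e.lower() not in text_after
    ]
    return {
        "links": sorted(links_before - links_after),
        "entities": sorted(lost_entities),
    }


if __name__ == "__main__":
    draft = ('<p>Read our <a href="/zero-trust-guide">zero trust guide</a> and '
             '<a href="/mfa">MFA basics</a> from Okta.</p>')
    humanized = ('<p>Read our <a href="/zero-trust-guide">security guide</a> '
                 'and MFA basics.</p>')
    print(find_losses(draft, humanized, ["Okta", "MFA", "zero trust"]))
```

In this made-up example the `/mfa` link, the brand name, and the "zero trust" phrase all disappeared, which is exactly the kind of quiet loss that weakens on-page relevance.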

Detectors change fast. Reader behavior and clarity do not. Optimize for the second, and use “humanizers” as small helper tools, not the core of your SEO workflow.