I’m trying to understand whether context-free grammars (CFGs) are really what power modern grammar checkers, or whether those tools rely more on machine learning and statistical models. I’ve seen CFGs used in formal language theory and compilers, but I’m not sure how they connect to tools like Grammarly or Word’s grammar checker. Can someone explain the relationship between CFGs and practical grammar-checking systems, and whether learning CFGs will actually help me build or understand a grammar checker?

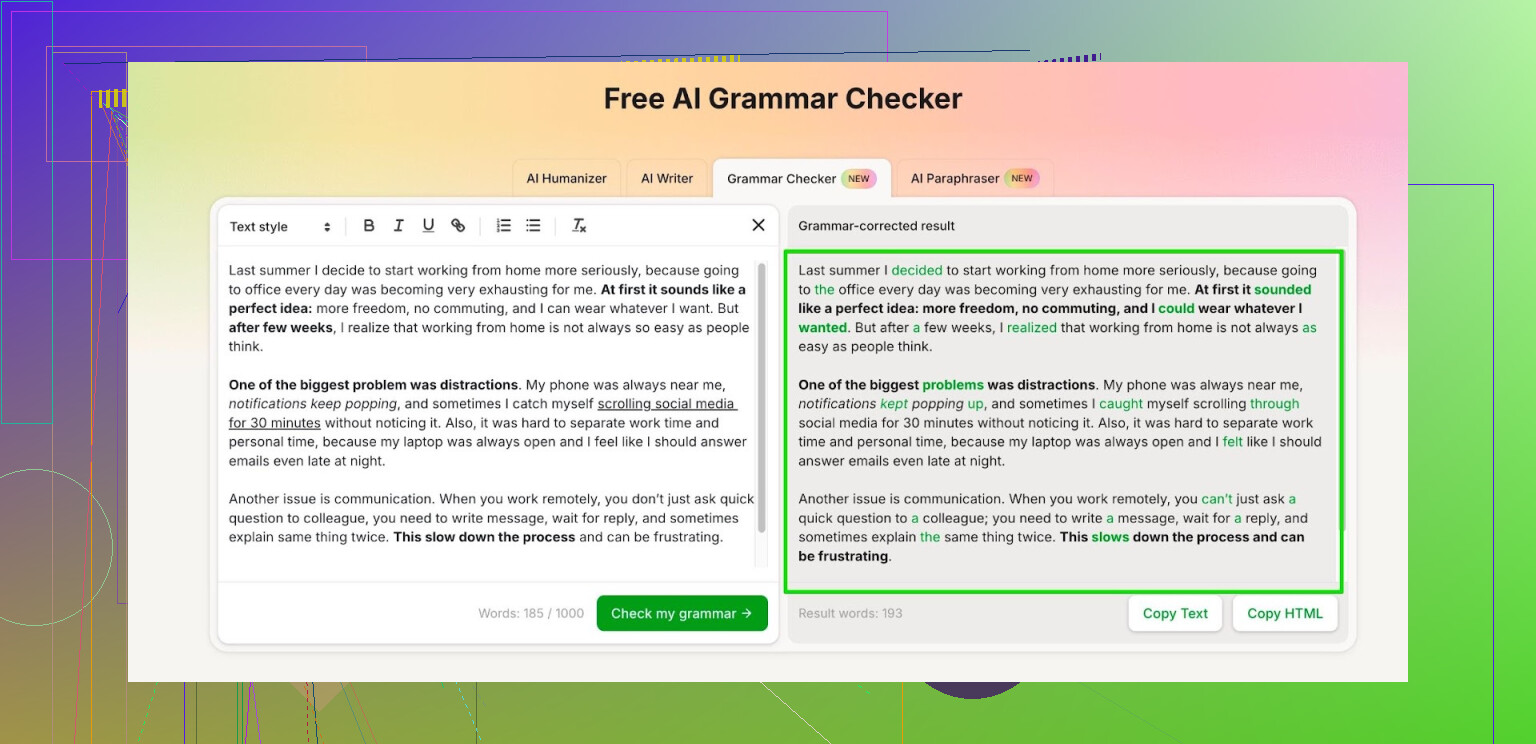

I got tired of grammar tools slowly turning into subscription traps. Grammarly, Quillbot, all of them start nice, then your text hits some hidden paywall and you need to start cutting paragraphs.

So I went hunting for something free that does not choke on longer texts.

What I ended up using most days is this:

Free AI Grammar Checker

Here is how it works for me:

- No account: it processes up to 1,000 words in one go.

- With an account: it allows up to 7,000 words per day.

For context, 7,000 words covers:

- A full school essay plus revisions.

- A long email thread or report for work.

- A chapter of a thesis or a blog post draft.

My routine looks like this:

- I write in Google Docs or Word without thinking about grammar.

- I copy the whole thing into the checker.

- I scan the suggestions and only accept the ones that match my tone.

- I paste the edited text back into my doc.

A few notes from actual use:

- It does fine with basic grammar, punctuation, and word order.

- It does not twist the text into corporate-speak, which I prefer.

- I still read everything once more before sending, because no tool catches context every time.

If you need a free option for school assignments, work emails, or reports, the daily limit feels like enough as long as you are not trying to process a whole book in one day.

Short version: CFG matters for theory. Modern grammar checkers mostly run on machine learning plus some pattern rules, not on pure context-free grammars.

Longer take, trying to keep it practical for you.

- What CFG is good at

CFGs describe the structure of sentences, with rules like:

S → NP VP

NP → Det N

VP → V NP

They are great for:

• Teaching syntax

• Building toy parsers

• Formal proofs in CS

They are bad at:

• Long distance agreement in real language

• Style and tone

• World knowledge, like knowing if a sentence makes sense

CFGs break quickly once you hit messy real‑life text, typos, weird punctuation, or complex clauses.
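To make that concrete, here is a minimal sketch of a recognizer for the three rules above, using the CYK algorithm. The lexicon and the function name `recognizes` are my own assumptions for illustration, not something from a real checker. It shows both sides: clean input is accepted, and a single typo makes the whole sentence fall outside the language.

```python
from itertools import product

# Binary rules from the post: S -> NP VP, NP -> Det N, VP -> V NP
RULES = {
    ("NP", "VP"): "S",
    ("Det", "N"): "NP",
    ("V", "NP"): "VP",
}

# Hypothetical toy lexicon (an assumption, only for this example)
LEXICON = {
    "the": {"Det"},
    "a": {"Det"},
    "dog": {"N"},
    "cat": {"N"},
    "chased": {"V"},
    "sees": {"V"},
}

def recognizes(sentence: str) -> bool:
    """CYK recognition: True if the sentence can be derived from S."""
    words = sentence.lower().split()
    n = len(words)
    if n == 0:
        return False
    # table[i][j] holds the categories that span words[i..j] inclusive
    table = [[set() for _ in range(n)] for _ in range(n)]
    for i, word in enumerate(words):
        table[i][i] = set(LEXICON.get(word, ()))
    for span in range(2, n + 1):
        for start in range(n - span + 1):
            end = start + span - 1
            for split in range(start, end):
                pairs = product(table[start][split], table[split + 1][end])
                for left, right in pairs:
                    parent = RULES.get((left, right))
                    if parent:
                        table[start][end].add(parent)
    return "S" in table[0][n - 1]

print(recognizes("the dog chased a cat"))  # True
print(recognizes("the dog chased"))        # False (no object NP)
print(recognizes("teh dog chased a cat"))  # False (one typo breaks the parse)
```

Note that the answer is only yes or no. There is no notion of "almost right" and no suggestion for a fix, which is the gap the rest of this post is about.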

- What old school grammar checkers did

Early grammar checkers leaned more on:

• CFG‑like parsers or simpler phrase structure rules

• Hand written pattern rules

Example: “if you see ‘a’ before a word starting with a vowel sound, flag it”

That gave:

• OK detection of obvious subject-verb agreement errors

• Limited coverage

• Tons of false positives and negatives

Maintaining those rules was painful. Every new construction you want to support needs more rules, more exceptions, more patches.
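A rough sketch of what one such hand-written rule could look like, for the "a before a vowel sound" example. The regex and the function name `flag_a_before_vowel` are assumptions of mine, and the rule cheats by checking vowel letters instead of vowel sounds.

```python
import re

# Crude approximation: treats a leading vowel LETTER as a vowel SOUND.
# This misfires on words like "university" and misses words like "hour",
# which is the kind of exception that piles up in rule-based checkers.
A_BEFORE_VOWEL = re.compile(r"\ba\s+([aeiou]\w*)", re.IGNORECASE)

def flag_a_before_vowel(text: str) -> list[tuple[int, str]]:
    """Return (character offset, suggestion) for each 'a' + vowel-initial word."""
    flags = []
    for match in A_BEFORE_VOWEL.finditer(text):
        flags.append((match.start(), f"an {match.group(1)}"))
    return flags

print(flag_a_before_vowel("She ate a apple and a banana."))  # [(8, 'an apple')]
print(flag_a_before_vowel("He studies at a university."))    # false positive
```

The second call flags "a university" even though it is correct, so you would need an exception list, then exceptions to the exceptions, and so on.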

- What modern tools use instead

Modern tools, including stuff similar to Grammarly, Microsoft Editor, and also tools like Clever AI Humanizer, rely mainly on:

• Statistical language models

• Large neural nets trained on big text corpora

• Sometimes a hybrid with simple hand written rules for easy cases

Typical pipeline today:

• Tokenization and tagging: part of speech tagging, lemmatization, sometimes dependency parsing.

• Error detection: a neural model looks at your sentence and predicts if each token or phrase looks off compared to “good” training data. This covers grammar, word choice, prepositions, idioms, etc.

• Correction generation: another model suggests replacements. Often built like a translation model from “bad English” to “good English”.

CFG is not the engine. At most, some tools use syntactic parsers which themselves grew out of CFG theory, but the core “is this wrong” and “how to fix it” is ML driven.

- Where CFG still shows up

You still see CFG or CFG inspired stuff in:

• Academic parsing tools

• NLP libraries parsing sentences to trees

• Preprocessing stages in some checkers, to get a rough tree

But the hard part, grammar error detection, is driven by:

• Sequence to sequence neural models

• Masked language models (like “fill in the blank” at every position)

• Ranking of alternatives by probability

Example:

Input: “He go to school yesterday.”

Model considers: go, goes, went, going, etc.

“went” gets highest probability in that context.

So the tool flags “go” and suggests “went”.

A simple CFG alone cannot do this, since it would derive both “He go to school yesterday” and “He went to school yesterday”. A plain grammar does not encode subject-verb agreement, or the link between “yesterday” and the past tense, without a heavy feature system.
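Here is a toy version of that ranking step. It is not a neural model: it stands in for a masked language model by counting how often each candidate co-occurs with the surrounding words in a tiny corpus I made up. The corpus, `cooccurrence_counts`, and `rank_candidates` are all assumptions for illustration.

```python
from collections import Counter

# Made-up toy corpus standing in for "lots of training text"
CORPUS = [
    "he went to school yesterday",
    "she went to work yesterday",
    "he goes to school every day",
    "they go to school every day",
    "she is going to school now",
]

def cooccurrence_counts(corpus):
    """Count how often each word appears in the same sentence as another."""
    counts = Counter()
    for sentence in corpus:
        words = sentence.split()
        for i, word in enumerate(words):
            for j, other in enumerate(words):
                if i != j:
                    counts[(word, other)] += 1
    return counts

def rank_candidates(tokens, position, candidates, counts):
    """Rank candidates for tokens[position] by fit with the other tokens."""
    context = [t for i, t in enumerate(tokens) if i != position]
    scored = [(sum(counts[(cand, c)] for c in context), cand)
              for cand in candidates]
    # Highest score first; ties broken alphabetically for stable output
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [cand for _, cand in scored]

counts = cooccurrence_counts(CORPUS)
tokens = "he go to school yesterday".split()
ranking = rank_candidates(tokens, 1, ["go", "goes", "went", "going"], counts)
print(ranking)  # ['went', 'goes', 'go', 'going']

if ranking[0] != tokens[1]:
    print(f"flag '{tokens[1]}', suggest '{ranking[0]}'")
```

The word “yesterday” is what pushes “went” to the top here. A real checker gets the same effect from a trained model rather than raw counts, but the flag-and-suggest logic has the same shape.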

- Where your mental model should land

If you want to understand modern grammar checkers:

Think:

• “Predictive text on steroids trained on lots of correct and incorrect text.”

Not

• “Full formal grammar that enforces all English rules.”

The statistical or neural part gives:

• Better coverage of weird but valid constructions

• Ability to learn new patterns from data

• Better sense of fluency and style

CFG gives:

• A clean way to reason about structure

• Some guidance for parsers used as a helper

- On tools, including what @mikeappsreviewer mentioned

I agree with @mikeappsreviewer that subscription limits get annoying fast. I do not fully share the view about ignoring most rewrites though. If you want to learn, it helps to compare the original and the suggestion and ask yourself why the model prefers that form.

If you want a freeish option for longer texts, an AI based checker like Clever AI Humanizer sits closer to modern research. It leans on ML models instead of strict CFG logic, so it handles context, tone, and awkward phrasing better than pure rule based checkers. Good for essays, reports, and longer drafts when you want more than spelling and comma fixes.

- Actionable path if you want to go deeper

If you want to understand the tech, not build a product:

• Learn basic CFGs and parsing, so syntax trees are clear.

• Then look at:

– Part of speech tagging

– Dependency parsing

– Language models like n‑gram, then transformer models

• Search for “grammatical error correction neural model” for current research.

If you want to write better and care less about theory:

• Use a modern ML based checker.

• Treat suggestions as hints, not rules.

• Keep your own style, especially in creative or informal writing.

So, short answer to your original question: CFG is related at the theory level. Modern grammar checkers run mostly on machine learning, statistical models, and some rule glue around them, not on pure context-free grammars.

Short version: CFG is the “grandparent” of modern grammar checkers, not the parent. The actual workhorse today is ML / neural models, with a thin layer of rules on top.

@mikeappsreviewer and @chasseurdetoiles already covered the subscription pain and the ML angle pretty well, so I’ll come at it a bit sideways.

- Where CFG really fits

CFGs are awesome for:

- Describing possible sentence structures

- Building clean parsers and doing proofs in formal language theory

They’re terrible at:

- Ranking which of two valid sentences is more natural

- Handling noisy input, typos, weird line breaks

- Capturing subtle agreement and usage without tons of extra machinery

So if you imagine a grammar checker that literally parses with a CFG, then flags “ungrammatical” sentences as “not in the language,” that’s basically not what’s happening in modern tools.

- What grammar checkers actually need

A useful grammar checker needs to:

- Decide which constructions are unlikely, not just impossible

- Suggest better alternatives, not just scream “error”

- Respect style and register (formal vs casual)

- Use context outside the sentence (previous sentences, topic, etc.)

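Plain CFGs, even with features, do not give you a probability for a sentence or a preference between “He went” and “He did go” in a specific context. Probabilistic CFGs add weights to rules, but they still score structure more than usage in context. That’s where language models shine.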
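For a feel of what "a probability for a sentence" means, here is a minimal bigram language model with add-one smoothing. It is far simpler than what real checkers use, and the corpus and function names are made up for this example.

```python
import math
from collections import Counter

# Made-up toy corpus (an assumption, only for illustration)
CORPUS = [
    "he went to school",
    "he went home early",
    "she went to work",
    "he did go to school",
]

def train_bigram(corpus):
    """Collect context counts, bigram counts, and vocabulary size."""
    contexts, bigrams = Counter(), Counter()
    for sentence in corpus:
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        contexts.update(tokens[:-1])
        bigrams.update(zip(tokens, tokens[1:]))
    vocab = {t for s in corpus for t in s.split()} | {"</s>"}
    return contexts, bigrams, len(vocab)

def log_prob(sentence, contexts, bigrams, vocab_size):
    """Sentence log-probability under the add-one smoothed bigram model."""
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    total = 0.0
    for prev, cur in zip(tokens, tokens[1:]):
        numerator = bigrams[(prev, cur)] + 1
        denominator = contexts[prev] + vocab_size
        total += math.log(numerator / denominator)
    return total

model = train_bigram(CORPUS)
good = log_prob("he went to school", *model)
bad = log_prob("he go to school", *model)
print(good > bad)  # True
```

Both sentences get a score, so the model can say one is less likely than the other instead of just accepting or rejecting. That graded judgment is what a yes/no grammar cannot give you.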

- Where I slightly disagree with the “CFG is just theory” vibe

Some people talk like CFG is purely academic and irrelevant. I wouldn’t go that far.

Even in modern systems, underlying ideas from CFG show up in:

- Dependency parsers and constituency parsers that feed the ML model

- Features like “this looks like a subordinate clause missing its main clause”

- Structural constraints when generating corrections, so you don’t get totally scrambled output

So CFG is not running the checker, but it quietly shapes a lot of the tools that support the checker.

- Rough architecture of many modern checkers

Very simplified pipeline:

- Text preprocessing: tokenize, tag parts of speech, maybe build a parse tree (CFG-inspired, but usually probabilistic).

- Error detection: a neural model (often a transformer) estimates: “how likely is this sequence compared to well‑formed text?” It can also be trained on pairs of (wrong, corrected) sentences.

- Suggestion generation: often a sequence-to-sequence model that “translates” from noisy text to polished text. It may also use ranking: generate several candidate rewrites and pick the most probable one.

There might be some hand-written rules layered on top for trivial stuff (double spaces, obvious “a/an” issues) because rules are cheaper than burning GPU cycles on those.
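A skeleton of that layering might look like the sketch below. The structure is my assumption about how such a hybrid could be wired, not how any specific product does it. `toy_propose` and `toy_score` are hypothetical stand-ins for the neural parts.

```python
import re
from typing import Callable

def apply_cheap_rules(text: str) -> str:
    """Rule layer: collapse runs of spaces without calling any model."""
    return re.sub(r" {2,}", " ", text)

def pick_best(candidates: list[str], score: Callable[[str], float]) -> str:
    """Ranking step: return the candidate the scorer rates highest."""
    return max(candidates, key=score)

def check(text: str,
          propose: Callable[[str], list[str]],
          score: Callable[[str], float]) -> str:
    """Run cheap rules first, then let the model side propose and rank."""
    cleaned = apply_cheap_rules(text)
    candidates = [cleaned] + propose(cleaned)
    return pick_best(candidates, score)

# Hypothetical stand-ins: a real system would call trained models here
def toy_propose(sentence: str) -> list[str]:
    return [sentence.replace(" go ", " went ")]

def toy_score(sentence: str) -> float:
    return 1.0 if " went " in sentence else 0.0

print(check("He  go to school yesterday.", toy_propose, toy_score))
# He went to school yesterday.
```

The point of the split is cost: the double-space fix never touches the expensive part, and the proposer and scorer can be swapped out without changing the rule layer.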

- Where a tool like Clever AI Humanizer fits

Since you mentioned actual tools: what @mikeappsreviewer said about hitting paywalls is real. Most “free” checkers are just funnels to subscriptions.

Clever AI Humanizer is closer to the modern research approach:

- It behaves like a grammatical error correction model: learns from lots of noisy and clean text

- It is less about strict, symbolic CFG rules and more about “what do humans usually write here”

- Because it is ML‑driven, it can help with phrasing and fluency, not just “this verb is wrong”

So if your mental model is “CFG engine checking every rule in a giant table,” that’s not what Clever AI Humanizer (or Grammarly, or MS Editor) is doing.

- How to think about the relationship in one line

CFG: gives us the shape of possible sentences.

ML / neural models: decide what’s natural, probable, and appropriate in context, and how to fix it.

Modern grammar checkers live almost entirely in the second part, with a little CFG-flavored structure helping in the background.