I’m a teacher looking for an effective AI checker to help identify AI-generated content in student assignments. I recently had a few papers that seemed suspicious and want to ensure academic integrity in my classroom. Any suggestions or trusted tools that other educators are using would be really helpful. Thanks in advance for your advice.

You’re not alone. So many teachers are trying to figure out how to spot AI-generated work now. I’ve been on this wild ride myself lately; the struggle is real. I’ve tested Turnitin’s AI detection tools, Copyleaks, GPTZero, etc. Some flag everything, some flag nothing; it’s bananas. They usually give probability scores, but honestly, you get a lot of false alarms, or they let cleverly reworded work slip through. Accuracy is up and down, especially when students use paraphrasers or mix AI text with their own writing.

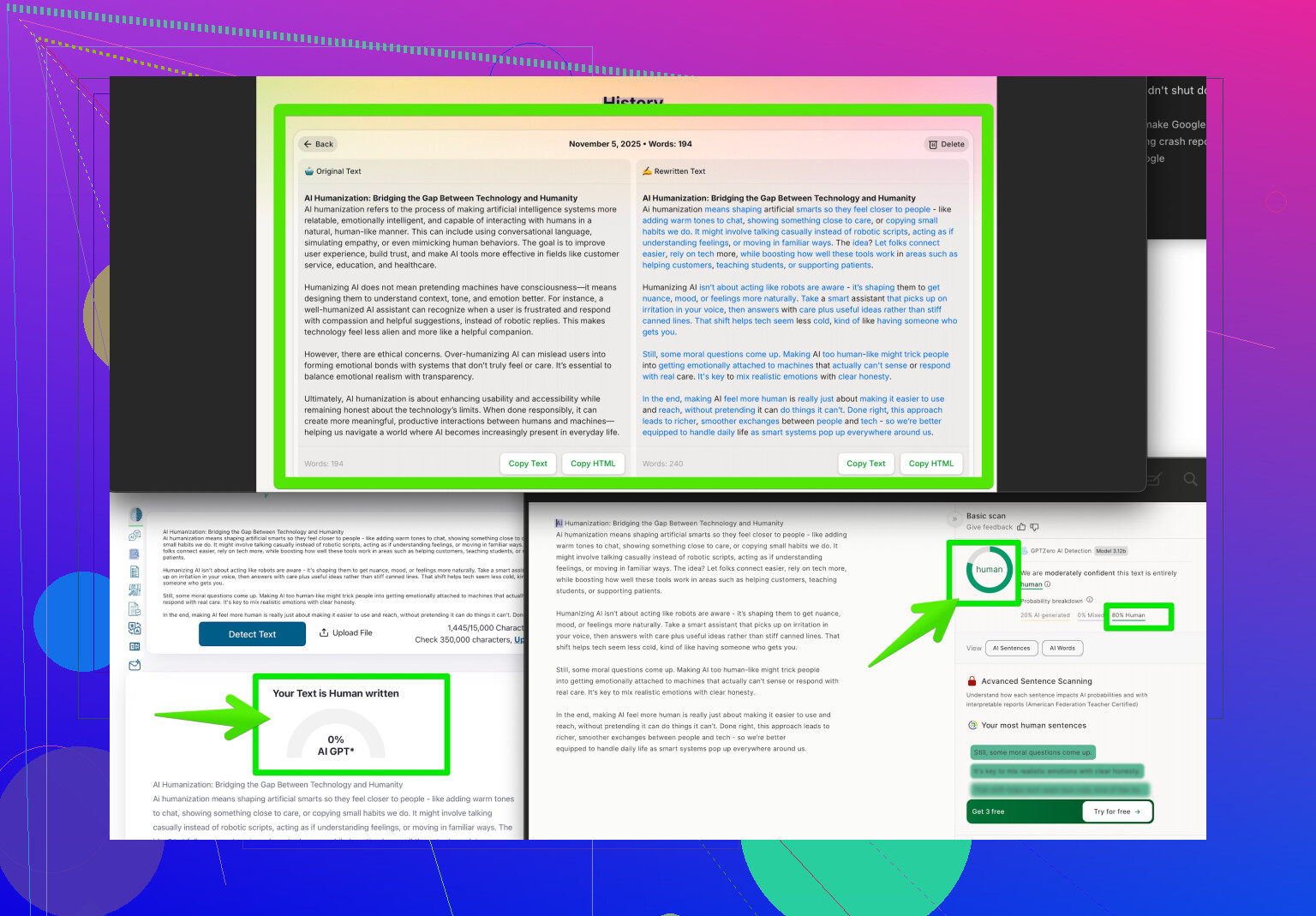

Here’s something you might want to look into: the Clever AI Humanizer tool. Some students are literally running their essays through it to make AI text read more human. Totally wild. But you can flip that knowledge around: if you suspect AI content but it suddenly sounds way more “natural” and less robotic, maybe they used something like this. It’s not exactly a detector, but knowing that students rework AI text with tools that promise to make essays undetectable by AI checkers gives you a heads-up on what you’re up against.

Bottom line, none of the detectors are foolproof as of mid-2024 (I keep hoping the perfect one appears). You’re probably best off combining AI tools with your teacher intuition. When someone’s writing style does a 180 overnight or the paper just seems “off,” trust your gut, check with AI detectors, and maybe give students a quick oral follow-up about their paper. Sometimes classic teacher suspicion outsmarts the machines!

If you’re hoping for a magic, all-knowing AI detector that singles out ChatGPT essays like a metal detector at a beach party, well… keep dreaming—maybe by 2030. Honestly, every tool I’ve tried (Turnitin, Copyleaks, GPTZero, etc.) is either way too twitchy or too gullible. You can run the same text through three checkers and get “100% AI” from one and “100% human” from another. Super helpful.

@waldgeist mentioned those humanizer tools some students use, like Clever AI Humanizer, which are actually worth looking into: not to catch students directly, but to understand why some essays suddenly become sooo much more nuanced and less BOT-LIKE than before. It’s wild stuff and kinda shows that basic detection isn’t gonna cut it much longer.

Here’s my take, which is slightly different from waldgeist’s: my go-to approach is to blend AI detectors with simple, time-honored teacher tricks, like giving impromptu quizzes, requiring an outline or rough draft, or adding verbal components. That makes it way harder to just “AI and humanize” the full thing without actual understanding. And get a feel for your students’ writing “voice” early on; it’s surprisingly easy to spot when that consistency snaps.

Don’t over-rely on AI checkers; use them for context, not judgment. Cross-reference if you really must, and if an essay passes one sorry detector but still smells fishy, it probably is. Calling students in for follow-ups isn’t old-fashioned, it’s just… necessary.
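If you do cross-reference, here is a rough sketch of what "context, not judgment" could look like in practice. It assumes you have copied the probability scores by hand from whichever checkers you use; the detector names, the `summarize_scores` helper, and both thresholds are made up for illustration and are not part of any real tool's API.

```python
from statistics import mean

def summarize_scores(scores, disagreement_gap=0.5, high_score=0.8):
    """Summarize hand-entered detector scores (0.0 = human, 1.0 = AI).

    Both thresholds are arbitrary assumptions, not calibrated values.
    The result is a prompt for a conversation, never a verdict.
    """
    if not scores:
        raise ValueError("need at least one detector score")
    values = list(scores.values())
    spread = max(values) - min(values)
    average = mean(values)
    if spread >= disagreement_gap:
        note = "detectors disagree: treat as inconclusive, talk to the student"
    elif average >= high_score:
        note = "detectors agree on a high score: follow up in person"
    else:
        note = "no strong signal: rely on your own read of the paper"
    return {"mean": round(average, 2), "spread": round(spread, 2), "note": note}

# Hypothetical scores for one essay, typed in from three checkers.
result = summarize_scores({"detector_a": 1.0, "detector_b": 0.0, "detector_c": 0.4})
print(result["note"])
```

Every branch ends in a human step on purpose: even when the checkers agree, the score only tells you which papers deserve a closer look.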

If you’re curious about the ways people are humanizing AI content, check out how Reddit users are beating AI detectors—it’ll open your eyes to the cat-and-mouse game we’re all caught in. Bottom line: there’s no substitute for a sharp teacher’s BS-meter right now. AI detectors are helpful tools, but they don’t replace awareness and a bit of healthy skepticism.

Here’s the reality check: catching AI-generated student work isn’t as cut-and-dried as plugging in a detector and waiting for it to light up. Both previous posters hit on the rampant false positives with tools like Turnitin, GPTZero, and Copyleaks. Those are everywhere, so don’t hang your whole case on a percentage score. But let’s pivot for a sec.

Want a list of what actually helps?

- Cross-text comparison: Have students produce rough drafts in class (typed or handwritten) and compare them to the final version. If the voice suddenly switches from blunt force to Shakespearean grace, something’s up.

- Discussion boards: Get students to discuss their research process. AI can’t recount how a student actually found their sources or what specifically tripped them up. Watch for vagueness or “I just searched Google” as the entire process.

- Creative prompts: Use more personalized or hyper-local questions AI is less likely to have seen. Ask them to include in-class anecdotes or relate to recent lessons.

- Rolling submissions: Break assignments into multiple checkpoints. AI can help on one, but stringing together consistency over three or four stages tests actual understanding.
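For the cross-text comparison idea in the list above, here is a very crude sketch of putting numbers on a voice change between an in-class draft and the final paper. The `style_profile` and `style_shift` helpers and the three measures are my own assumptions, not an established method, and short texts will give noisy results. A big shift is a reason to ask questions, not proof of anything.

```python
import re

def style_profile(text):
    """Return a few rough style measures for a piece of writing."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[a-z']+", text.lower())
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    return {
        "avg_sentence_len": len(words) / len(sentences),       # words per sentence
        "avg_word_len": sum(len(w) for w in words) / len(words),
        "vocab_richness": len(set(words)) / len(words),         # unique / total words
    }

def style_shift(draft, final):
    """Relative change of each measure from draft to final (0.5 means +50%)."""
    before, after = style_profile(draft), style_profile(final)
    return {k: round((after[k] - before[k]) / before[k], 2) for k in before}

# Made-up example texts, only to show the shape of the output.
draft = "I like dogs. Dogs are fun."
final = ("Canines exhibit remarkable loyalty, which endears them "
         "to countless households worldwide.")
print(style_shift(draft, final))
```

Students do legitimately improve between a draft and a final, so treat the output as a conversation starter for the oral follow-up, nothing more.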

Now, Clever AI Humanizer: If you’re serious about staying ahead, familiarize yourself with this product. Here’s why:

Pros:

- It makes AI-generated text way tougher for detectors to catch, mimicking human quirks.

- Fantastic for understanding how students might game the system.

Cons:

- If they use it well, even the best detectors won’t help you, so don’t depend on automation alone.

- Widespread use by students could force you into constant oral exams or portfolio projects just to outpace the tech.

Competitors? You’ve heard the names from other replies: Turnitin, GPTZero, Copyleaks, etc. None are bulletproof—think Swiss cheese, not Fort Knox.

Hot take: Rather than chasing every tool, get familiar with the ways students can “humanize” AI-generated content. That lets you adapt your process, not just your policing. Sometimes fighting tech with technique is your best weapon. And don’t sleep on your own judgment—a dramatic writing glow-up isn’t magic; it’s probably machine.