I’m looking for a GPTHuman AI review to check if my content sounds natural, human-written, and trustworthy. I’ve been using AI tools and I’m worried the tone or structure might give it away. Can someone review it, point out what feels “AI-like,” and suggest how to fix it so it reads more authentically?

GPTHuman AI Review, from someone who spent too long testing it

I saw GPTHuman pushing the line about being “the only AI humanizer that bypasses all premium AI detectors” and decided to put it through my usual test set. That slogan did not hold up.

I used the same three samples I always use for humanizer tests. Took raw LLM content, ran each through GPTHuman, then threw the outputs at a few detectors.

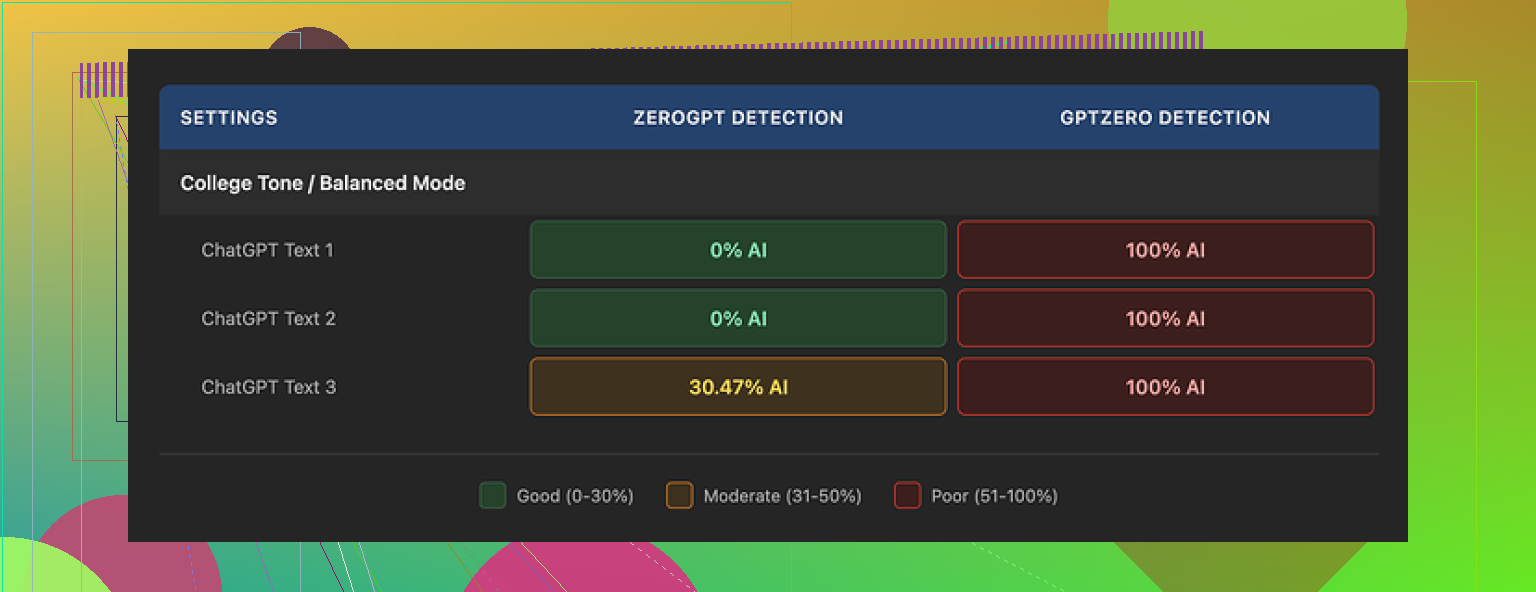

Here is what happened:

• GPTZero flagged every single GPTHuman output as 100% AI. No edge cases, no borderline calls. All three.

• ZeroGPT let two of the samples slide with a 0% AI score, but the third one landed around 30% AI, which is not what I would trust for anything serious.

Inside GPTHuman, their own “human score” was high for all three runs. Those numbers did not match what external tools reported at all. So if you rely on that internal score, you get a false sense of safety.

On top of that, the text itself looked off in ways that will bother anyone who writes a lot.

Here are some specific issues I ran into:

• Subject and verb out of sync. Things like “the results shows” and “the users is” slipped in.

• Sentences cut off halfway through, like it tried to rephrase and then abandoned the thought.

• Word swaps that changed meaning or made no sense in context. You see this when it tries to avoid repetition and picks the wrong synonym.

• Closings that read like a machine stitched together three different endings. Hard to follow, and not something you would send to a client or professor.

To be fair, the formatting looked fine. Paragraphs were clean, spacing was okay, and it did not spam weird lists or broken markdown. If you skim it fast, it looks human enough, but reading line by line, the grammar breaks stand out.

Now the part that annoyed me more than the grammar: the limits and terms.

Here is how the free tier behaved for me:

• Total of 300 words across all runs. Not 300 per run, 300 in total.

• After hitting that cap, it blocked further usage.

• I ended up registering three separate Gmail accounts to finish my set of tests. That was the only way to get enough words through it without paying.

On the paid side, the plans looked like this when I checked:

• Starter plan: from $8.25 per month if billed yearly.

• Unlimited plan: $26 per month.

• Even with “Unlimited”, single outputs are capped at 2,000 words per run.

So if you want to run long-form content like full articles, reports, or theses, you have to chunk them, which makes consistency harder and is a pain to manage.
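If you do end up chunking, here is a rough sketch of how I would split a long draft at paragraph boundaries so each piece stays under the cap. The 2,000-word limit is the one I saw on the plan page; the function name `chunk_by_paragraph` is just something I made up for illustration, and nothing here talks to GPTHuman itself.

```python
def chunk_by_paragraph(text: str, max_words: int = 2000) -> list[str]:
    """Group paragraphs into chunks of at most max_words words each.

    Assumption: paragraphs are separated by blank lines. A single paragraph
    longer than max_words is kept whole as its own oversized chunk.
    """
    chunks, current, count = [], [], 0
    for para in text.split("\n\n"):
        para = para.strip()
        if not para:
            continue
        n = len(para.split())
        # Close the current chunk if this paragraph would push it over the cap.
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Splitting on paragraphs rather than at a raw word count at least keeps each chunk readable on its own, though you still have to check tone across the seams by hand.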

The terms raised some flags too:

• No refunds on purchases. Once you pay, that is it.

• Your text is used for AI training by default. There is an opt-out, but you need to dig into the settings and explicitly disable it.

• They reserve the right to use your company name in their marketing. You have to contact them and tell them not to if you do not want that.

If you deal with sensitive docs or client work, that training-by-default thing matters. I would not run anything confidential through a tool on those terms.

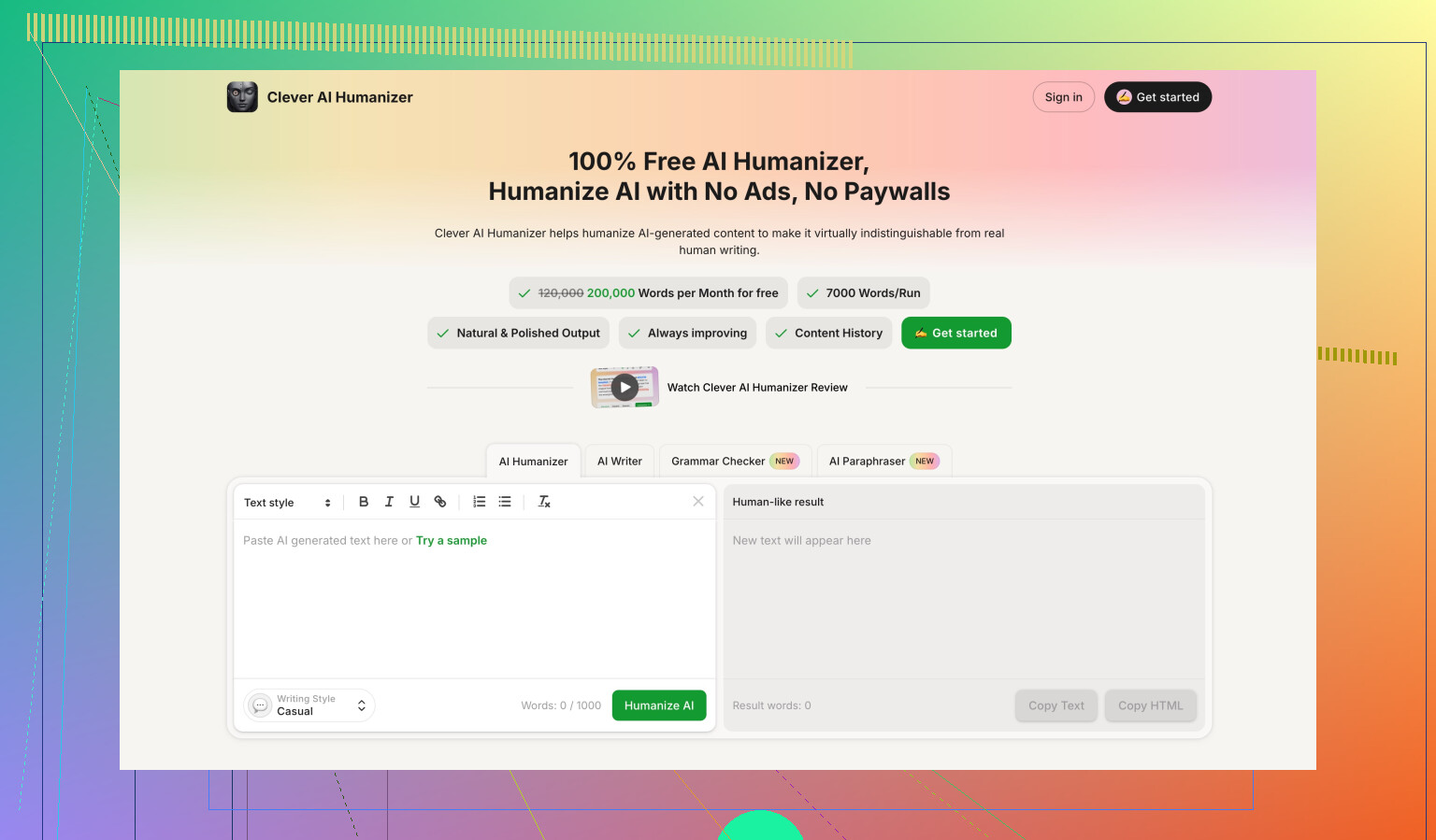

For context, during the same testing round I also ran my samples through Clever AI Humanizer. Results here, if you want to see details and screenshots:

In my runs, Clever AI Humanizer scored stronger on external detectors and stayed fully free at the time, without the tiny word cap or the forced account hopping. That does not make it perfect, but if you only care about detection scores and access, it performed better for me than GPTHuman.

So if you plan to use GPTHuman for school, client work, or blogs that get checked by tools like GPTZero, I would test with your own samples and detectors before paying, and definitely do not rely on the internal “human score” as proof of anything.

If your goal is “does my content feel human and trustworthy,” I’d stop thinking in terms of GPTHuman vs detectors and start thinking in terms of edits and signals real readers notice.

A few angles you can use that do not depend on one tool:

Human signals you want in your text

• Occasional first person or clear point of view

• Specific details or examples from your experience, not generic statements

• Small hedges and uncertainty, like “I’m not sure this fits…” instead of absolute claims

• Variation in sentence length, but no weird swings into super stiff phrasing

If your content reads like a manual, flattens emotion, and never shows doubt, people tag it as AI even without detectors.
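If you want a quick number for the sentence length point, a small script can help. This is only a sketch: the sentence splitter is naive (it splits after `.`, `!` or `?` followed by whitespace), the function names are hypothetical, and a low spread does not prove anything about who wrote the text. It just tells you where to look.

```python
import re
import statistics

def sentence_lengths(text: str) -> list[int]:
    """Return the word count of each sentence, using a naive splitter."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]

def length_spread(text: str) -> float:
    """Population standard deviation of sentence lengths, in words."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths)
```

A spread close to zero means every sentence is about the same length, which is usually the flat rhythm that reads like a manual.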

Structural checks

Read your piece out loud. Anywhere you trip or feel bored, mark it. That is usually where the AI tone shows.

Look for:

• Repeated phrases at paragraph starts

• “On the other hand” every other sentence

• Overuse of lists where you would normally write a short paragraph
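The first two checks are easy to rough out in a script if you have a lot of text to go through. Treat this as a sketch under my own assumptions: paragraphs are separated by blank lines, the `TRANSITIONS` list is just a starter set I picked, and the function names are made up for this example.

```python
import re
from collections import Counter

# Starter list of stock transitions; extend it with your own repeat offenders.
TRANSITIONS = ["on the other hand", "additionally", "moreover",
               "in addition", "as a result"]

def repeated_openers(text: str, n_words: int = 2) -> dict[str, int]:
    """Return paragraph openers (first n_words words) that appear more than once."""
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    openers = Counter(
        " ".join(re.findall(r"[a-z']+", p.lower())[:n_words]) for p in paragraphs
    )
    return {opener: n for opener, n in openers.items() if n > 1}

def transition_counts(text: str) -> dict[str, int]:
    """Count whole-phrase occurrences of each stock transition."""
    lowered = text.lower()
    return {
        t: len(re.findall(r"\b" + re.escape(t) + r"\b", lowered))
        for t in TRANSITIONS
    }
```

It will not catch everything, and reading out loud is still the better test, but it points you at the paragraphs worth rereading first.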

Edits that hide AI fingerprints

• Rewrite the intro and the conclusion by hand. These are where detectors and humans focus.

• Replace generic transitions:

“Additionally” → “Also”

“Moreover” → delete or “Plus”

• Add one short personal line every few paragraphs. For example “When I tried this, I messed it up the first time because I rushed the setup.”

About GPTHuman specifically

I agree with @mikeappsreviewer on the mismatch between GPTHuman’s internal “human score” and external tools like GPTZero. I have seen the same pattern with other “humanizers” too, so I do not treat any internal score as proof of safety.

I am a bit less harsh on small grammar slips though. Some humans write like that all day. The problem is when the errors feel random and context blind, which GPTHuman outputs often do.

If you still want a humanizer tool

• Test your own samples on your own detectors before you pay anything.

• Run a short paragraph, see how it edits your style. If it nukes your voice, skip it.

• Clever Ai Humanizer is worth testing in parallel, especially if you care about external detector scores and do not want to deal with tiny word caps. Still do a manual pass after. Tools do not understand your intent or your audience.

Simple manual “humanization” workflow

Use this on anything you wrote with AI tools:

• Step 1, delete the first and last paragraph. Rewrite them from scratch with your own hook and takeaway.

• Step 2, delete at least 20 percent of transitional fluff like “in addition,” “as a result,” “on the other hand.” Your text gets sharper fast.

• Step 3, add one specific detail per section. A number, a short anecdote, or a concrete scenario.

• Step 4, read aloud once and fix spots where you hear the robot voice.

If you want, paste a section of your content and I will point out the parts that sound AI-ish and how to tweak them.

Short version: GPTHuman isn’t going to magically make your text “undetectable,” and if you rely on it alone your stuff will still feel AI-ish to real readers.

I’m with @mikeappsreviewer and @vrijheidsvogel on most of their points, especially the mismatch between GPTHuman’s “human score” and external detectors. Where I’ll push back a bit is: obsessing over detector scores is kind of missing the point. Detectors are noisy, constantly playing catch‑up, and they can flag legit human writing too. You can burn hours chasing a green score and still end up with bland, soulless text.

If your goal is “natural, human-written, trustworthy,” here’s what I’d actually do:

Don’t outsource your voice

GPTHuman rewrites tend to smear everything into the same beige tone. That might trick a casual skim, but anyone who knows you (teacher, manager, long‑time readers) will notice your voice randomly changed overnight. That mismatch is a bigger red flag than a detector number.

Use AI to draft or brainstorm, but keep your own phrasing on:

- Jokes or side comments

- Opinions and rants

- Any personal story or example

Add signals no “humanizer” is great at

These are the things that make people trust you more than any tool:

- Tiny, slightly messy opinions: “Honestly, this part still confuses me sometimes.”

- Specific, real details: “I tried this on a 2,000 word lab report for my stats class…”

- Small contradictions: “On one hand I like X, but I’ve also seen it backfire when…”

GPTHuman tends to iron out that messiness, which ironically makes you more machine-like.

Use a split approach instead of full auto-humanizing

Instead of dumping the whole piece into GPTHuman, try:

- Keep your intro and conclusion purely your own. That’s where tone really matters.

- If you use a humanizer (GPTHuman or Clever Ai Humanizer), limit it to stiff middle sections that read like a manual. Then re-stitch everything by hand so it flows.

- After that, read once purely for vibe: “Would I actually talk like this to a friend?” If no, tweak the wording, not the structure.

Manual “trust” checks that matter more than detectors

When you’re worried “this sounds AI,” ask yourself:

- Did I say anything that could be wrong or debatable? Perfectly safe, polished takes often scream AI.

- Are there any small, specific risks or downsides mentioned? AI text loves one‑sided benefits.

- Did I leave in one or two informal bits: “kinda”, “to be fair”, “this part sucks if you’re new to it”?

It’s totally fine if your piece has a couple of “flaws” as long as they match how you actually write. Detectors don’t value that, readers do.

About the tools themselves

- GPTHuman: grammar glitches + tight limits + default data training is a rough combo, especially if your content isn’t public. Some of the errors it makes are the type teachers now expect from “AI trying to act human.”

- Clever Ai Humanizer: if you really want a “humanizer,” this one is worth testing side‑by‑side, mostly because it tends to keep things closer to readable and plays nicer with detectors in a lot of cases. Still, I’d treat it like a helper, not a magic filter.

If you want actual eyes on your writing, paste a paragraph or two of what you’re worried about (no need for the full thing), and I can point out exactly which lines scream “AI” and which ones already feel human so you’re not guessing in the dark.

Short version: GPTHuman is fine for quick tinkering, but if your goal is “passes a casual human sniff test,” you’re better off combining a light tool pass with a couple of brutal manual edits.

Where I slightly disagree with @vrijheidsvogel, @boswandelaar and @mikeappsreviewer: I wouldn’t spend much energy on structured “step 1, 2, 3” humanization every time. That can make everything feel formulaic in a different way. Instead, I’d attack your content from three angles that tools are bad at faking:

1. Voice continuity check

Read a few old things you wrote before you used AI: messages, essays, whatever. Then compare:

- Do you normally use short, choppy sentences or longer, sprawling ones?

- Do you swear, use slang, or reach for weird metaphors?

- Do you lean on questions like “So what does that mean for you?”

If your new piece suddenly sounds like a calm textbook, that shift itself is a giveaway. Fixing this usually means:

- Reintroducing one or two of your normal quirks per section

- Letting a bit of repetition stay, because real humans repeat themselves

2. “Friction points” instead of generic personalization

Everyone already mentioned “add personal examples.” The problem is that people then bolt on fake-sounding stories.

More reliable: add friction points.

- Where did you actually struggle with the topic?

- What did you find boring, confusing, or overrated?

- Where do you disagree with common advice, even slightly?

One line like “I tried three tools for this and honestly hated the first two because they slowed me down” feels far more human than a generic “for example, you could use various tools.”

3. Intent clarity pass

AI text often feels like it is trying to be helpful to everyone at once. That vagueness is a huge tell.

Ask yourself for each section:

- If a friend asked me this question in chat, what single thing would I want them to walk away with?

- Is there one sentence here that sounds like I am taking a side or making a tradeoff clear?

Sharpening intent is where even something like Clever Ai Humanizer can help, if you use it carefully.

Where Clever Ai Humanizer fits in

Since you mentioned tools like GPTHuman, here is how I would realistically use Clever Ai Humanizer, with pros and cons.

Pros

- Generally does a cleaner rewrite than GPTHuman in my experience, with fewer random grammar glitches

- Can reduce the stiff “corporate” tone without turning everything into fluff

- Tends to cooperate better with common AI detectors than raw LLM output

- Useful if English is not your first language and you want smoother phrasing as a base to edit

Cons

- It can still sand off your personal edge and make everything sound like a competent stranger wrote it

- If you accept its output wholesale, your writing may start to feel samey across different topics

- Still not something I’d trust with confidential material, same reason as any third party tool

- You must do a final pass yourself or it will keep tiny “tells” like overly neat structure

So if you want to involve Clever Ai Humanizer at all, I would:

- Draft however you like (your own text or a base AI draft).

- Run only the most robotic middle chunks through Clever Ai Humanizer.

- Reattach your own intro and conclusion.

- Add 2 or 3 friction points and one small, genuine opinion that could be wrong.

That keeps the readability boost, helps with detectors somewhat, and still lets your own voice and imperfections show through.

If you want, paste 2 or 3 paragraphs you are unsure about. I will mark specific sentences that feel “AI-bright” and show you exactly how I would roughen them up without wrecking the meaning.